Your Team Is Already Using AI. Give Them Guardrails Before It Spreads.

Small businesses do not need heavy AI governance to start. They need clear tool rules, data boundaries, review gates, and a rhythm for learning from mistakes.

Practical AI and technology guidance

Leaf Lane works like a fractional AI and technology advisor for businesses that need clear direction, practical priorities, and useful systems without adding another full-time leadership role.

We help you sort through the noise, choose the right level of automation, and move from conversation to roadmap to implementation when a workflow is ready.

Choose the right level of help

Best when you want a trusted outside perspective on where AI, automation, or better systems could actually help.

Best when you want that discussion turned into a reviewed diagnostic, prioritized recommendations, and a written action plan.

Assessment pricing is normally $1,000. Right now, the checkout code brings that to $0.

We help decide where modern technology belongs in the business, where it does not, and what should be handled first.

We evaluate tools, workflows, risk, cost, and readiness so you are not chasing every new platform or overbuilding too early.

When an idea is worth pursuing, we can help shape the roadmap, design the workflow, implement the system, and keep improving it.

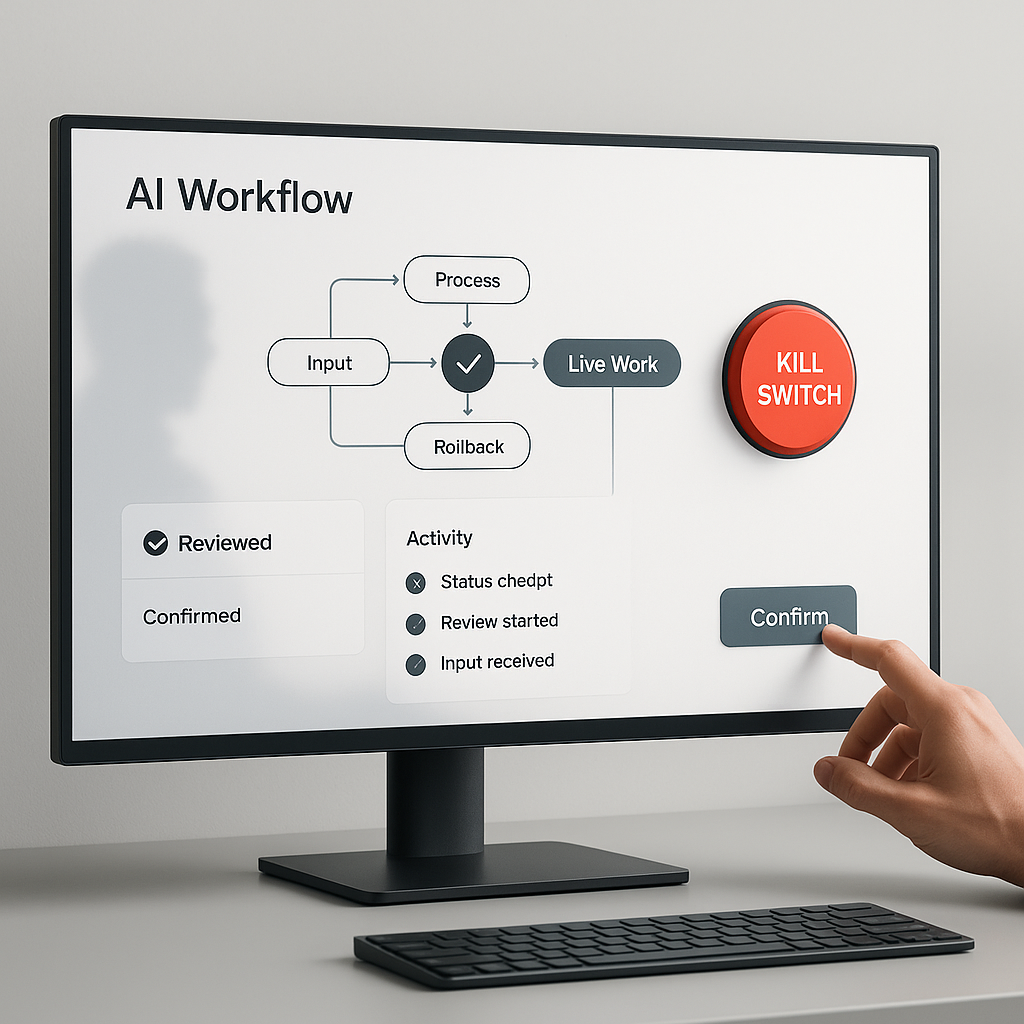

Before an AI workflow touches live work, define the stop conditions, approval gates, logs, and rollback path that keep people in control.

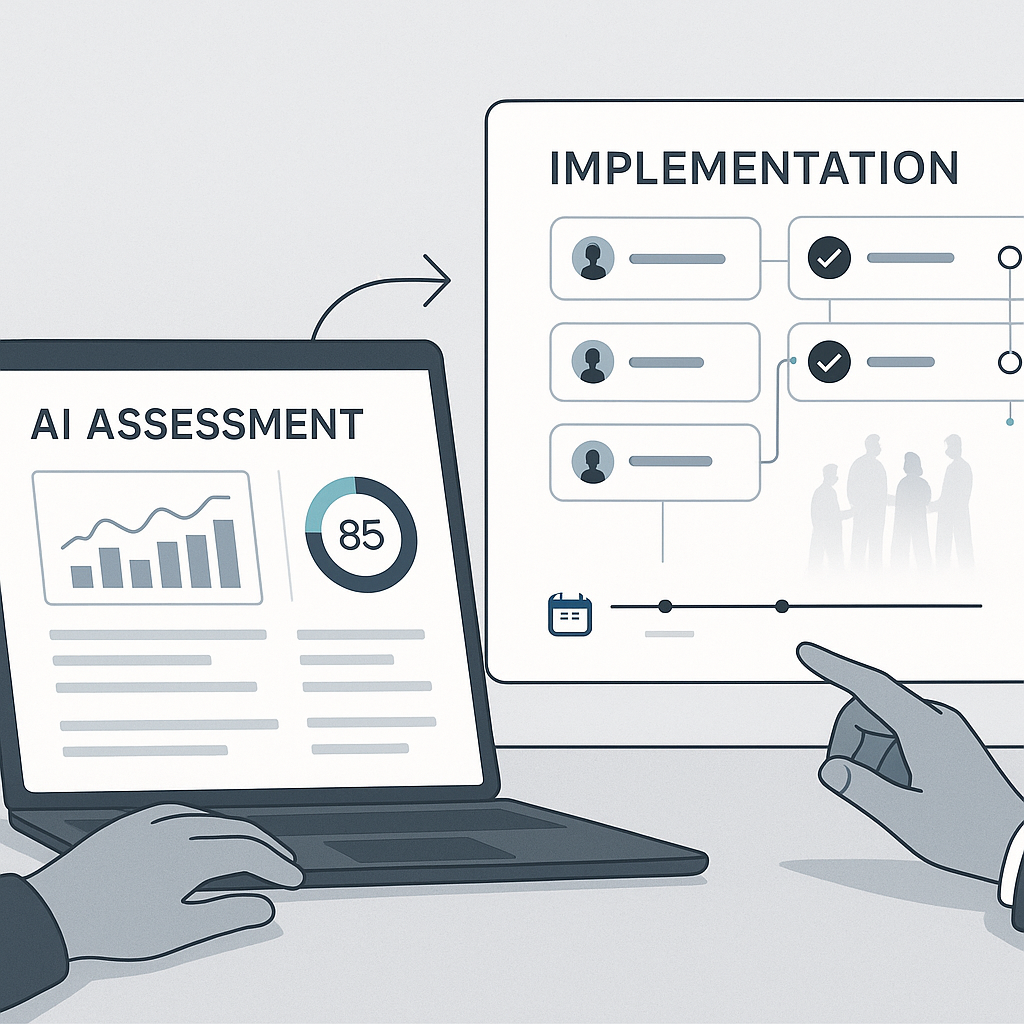

An AI assessment should not end with a polished report. It should turn into owners, approvals, dependencies, and a practical rhythm for implementation.

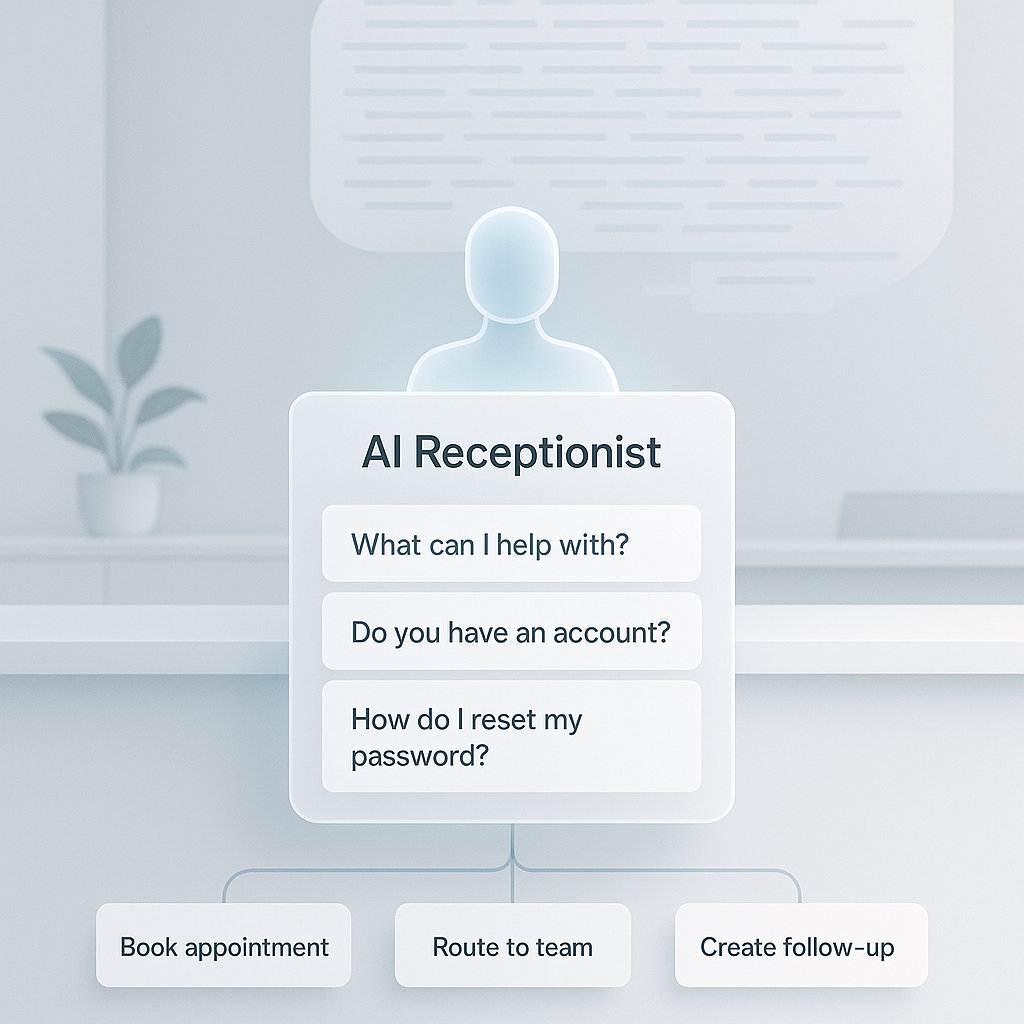

A useful AI receptionist does not collect every possible detail. It asks only what is needed to help the caller, route the work, and create a clean next step.

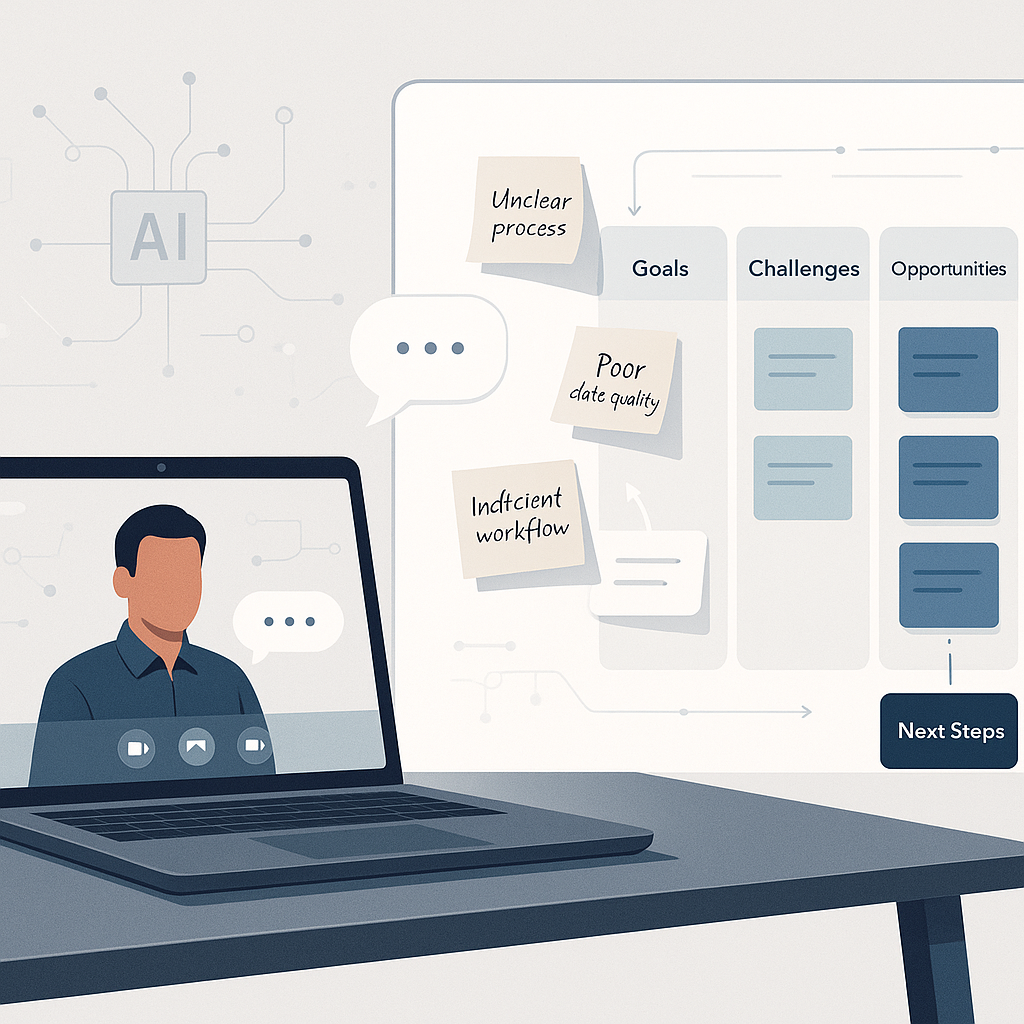

A good AI discovery process should extract real problems, structure them, and turn them into a practical roadmap before anyone starts pitching tools.

Notes

Most teams measure the wrong thing when they start using AI. They count prompts, tools, features, or experiments. The more useful early question is simpler: how many hours did we get back? Pick one task. Time it before AI. Time it after AI. Multiply the difference by how often the task comes up. If the time savings are not visible yet, the tool may still be interesting, but it has not yet proved enough value to justify going deeper.
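As a rough illustration, the before-and-after arithmetic fits in a few lines of Python. This is only a sketch: the function name, the monthly time window, and the sample numbers (a 90-minute weekly report that drops to 30 minutes) are hypothetical and chosen for the example, not measured results.

```python
def hours_saved_per_month(minutes_before, minutes_after, times_per_month):
    """Estimate hours returned per month for one recurring task.

    minutes_before / minutes_after: timed duration of a single run of the
    task without and with the AI tool. times_per_month: how often it recurs.
    """
    minutes_saved = minutes_before - minutes_after
    return minutes_saved * times_per_month / 60


# Hypothetical numbers: a weekly report that took 90 minutes, now takes 30.
saved = hours_saved_per_month(minutes_before=90, minutes_after=30, times_per_month=4)
print(f"{saved:.1f} hours saved per month")  # prints "4.0 hours saved per month"
```

A result at or below zero means the tool is not saving time on that task yet, which is the signal to pause before going deeper.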

Most people want AI to handle the impressive parts of work first. In practice, the highest-value use cases are usually the boring ones: the weekly report, the invoice cleanup, the follow-up email, the meeting summary, the repeated customer reply. Start with the work that feels repetitive, predictable, and a little annoying. That is often where the first real value is hiding.

Most teams that have been using AI tools for a while are paying for more than they are truly using. A simple AI tool assessment takes about two hours and helps you make better decisions. Start with spend. Pull every AI-related subscription or API charge from the last three months. Then ask two questions for each tool: What job does it actually help with? Who is using it now? That is more useful than asking who has access. For tools that still matter, decide whether they are personal productivity tools or real team infrastructure. If a tool matters across the team, it should have an owner, a reason it was chosen, and a basic standard for how it gets used. The goal is not just to cut spend. It is to understand what your team is actually doing with AI so the next decision is based on reality.
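The assessment described above can be kept in a spreadsheet, but a small script shows the shape of it. The sketch below is an assumption-laden example, not a prescribed method: the `Tool` fields, the verdict wording, and the tool names and charges are all hypothetical placeholders.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Tool:
    name: str
    job: str           # what job it actually helps with ("" if nobody can say)
    active_users: int  # people using it now, not people who merely have access
    team_wide: bool    # True if it matters across the team


def spend_by_tool(charges):
    """Sum (tool_name, amount) charges from the last three months per tool."""
    totals = defaultdict(float)
    for tool_name, amount in charges:
        totals[tool_name] += amount
    return dict(totals)


def review(tools, charges):
    """Return (name, three_month_spend, verdict) rows, highest spend first."""
    totals = spend_by_tool(charges)
    rows = []
    for tool in tools:
        if not tool.job or tool.active_users == 0:
            verdict = "needs a closer look: no clear job or no current users"
        elif tool.team_wide:
            verdict = "team infrastructure: assign an owner and a usage standard"
        else:
            verdict = "personal productivity tool"
        rows.append((tool.name, totals.get(tool.name, 0.0), verdict))
    return sorted(rows, key=lambda row: row[1], reverse=True)


# Hypothetical inventory and three months of charges per tool.
tools = [
    Tool("WriterBot", "drafting follow-up emails", 4, True),
    Tool("SlideGenie", "", 0, False),
    Tool("NoteTaker", "meeting summaries", 1, False),
]
charges = (
    [("WriterBot", 60.0)] * 3
    + [("SlideGenie", 25.0)] * 3
    + [("NoteTaker", 10.0)] * 3
)

for name, total, verdict in review(tools, charges):
    print(f"{name}: ${total:.2f} over three months, {verdict}")
```

The verdicts are prompts for a conversation rather than decisions; a tool flagged for a closer look may still be worth keeping once someone can say what job it does.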
