How to Manage AI Projects: A Practical Guide for Teams

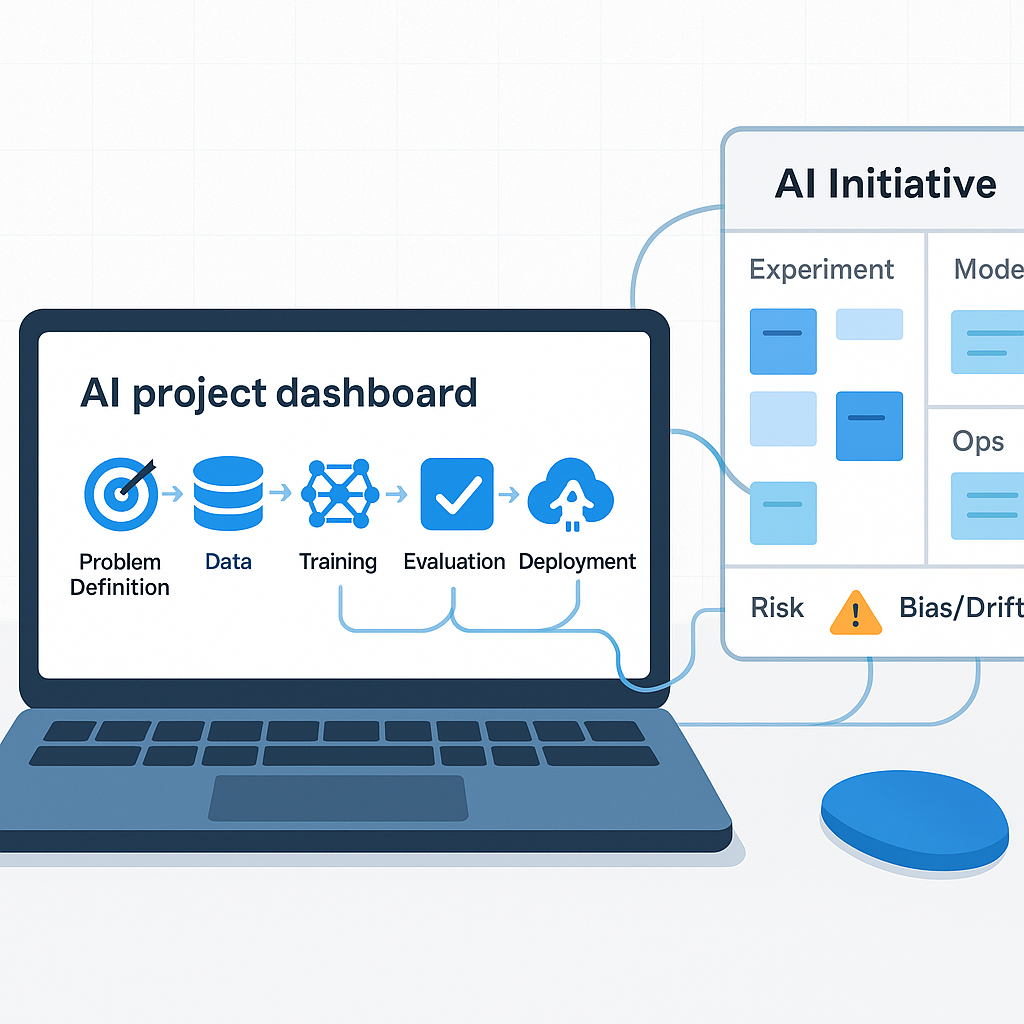

Managing an AI project is not like managing a software sprint. The timelines are fuzzier. The outputs are probabilistic. And the success metrics are often unclear until you are already mid-implementation. For teams running their first AI initiative, this ambiguity creates real risk: scope creep, stalled rollouts, and post-launch disappointment.

This guide gives you a practical framework for managing AI projects from kickoff to production — with concrete steps and the common pitfalls to avoid at each stage.

## Start With the Problem, Not the Tool

The most common mistake teams make is selecting an AI tool before defining the problem they are solving. Someone reads about a new LLM feature, books a demo, and suddenly IT is running a pilot with no clear success criteria.

Before any tool evaluation, write a one-paragraph problem statement: What decision or task is slow, error-prone, or bottlenecked? Who is affected? How often? What does good look like?

This single document becomes your north star for every downstream decision — what to build, what to measure, and when to stop.

## Define the Scope Layer by Layer

AI projects have a tendency to expand. What starts as "automate our meeting summaries" becomes "build a knowledge management system" by week three.

Use a three-layer scope definition:

Core: The minimum viable outcome. What must work for this project to be considered successful?

Adjacent: Features or integrations that would add value but are not required for the initial launch.

Future: Ideas worth capturing but out of scope entirely.

Lock the core before development starts. Revisit the adjacent layer only after core is validated.
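If it helps to make the three layers concrete, here is a minimal sketch of a scope register. The `Scope` class, its field names, and the example items are all hypothetical; the only rules it encodes are the two above: core cannot change once locked, and adjacent items are picked up only after core is validated.

```python
from dataclasses import dataclass, field

LAYERS = ("core", "adjacent", "future")


@dataclass
class Scope:
    """Hypothetical three-layer scope register (names are illustrative)."""
    core: list[str] = field(default_factory=list)
    adjacent: list[str] = field(default_factory=list)
    future: list[str] = field(default_factory=list)
    core_locked: bool = False
    core_validated: bool = False

    def add(self, layer: str, item: str) -> None:
        """Record an item in a layer; a locked core rejects new items."""
        if layer not in LAYERS:
            raise ValueError(f"unknown layer: {layer}")
        if layer == "core" and self.core_locked:
            raise RuntimeError("core is locked; record this as adjacent or future")
        getattr(self, layer).append(item)

    def promote_adjacent(self, item: str) -> None:
        """Move an adjacent item into core, allowed only after core is validated."""
        if not self.core_validated:
            raise RuntimeError("validate core before revisiting the adjacent layer")
        self.adjacent.remove(item)
        # Promotion is a deliberate decision, so it bypasses the lock.
        self.core.append(item)


scope = Scope()
scope.add("core", "automate our meeting summaries")
scope.core_locked = True
scope.add("adjacent", "push summaries to the team wiki")
scope.add("future", "build a knowledge management system")
```

A shared document works just as well. The point is that a request arriving in week three has an obvious place to go that is not the core.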

## Assemble the Right Team

Most AI projects do not fail because of the model. They fail because the right people were not in the room.

You need four roles, which can overlap in smaller teams:

The domain expert understands the problem deeply and can evaluate whether AI outputs are actually correct — not just fluent.

The technical lead owns integration, data pipelines, and deployment. They know what the infrastructure can support.

The project owner holds accountability for outcomes and keeps the initiative connected to business goals.

The end user representative provides feedback from people who will actually use the system daily. Do not skip this role.

## Choose Tools Based on Constraints, Not Headlines

There is no shortage of AI tools promising to overhaul your workflow. The right choice depends on your constraints: budget, data sensitivity, existing infrastructure, and team technical depth.

For most mid-market teams managing their first AI project, a practical stack looks like:

Claude or GPT-4o for generation, summarization, and analysis tasks where you need reasoning depth.

A simple prompt management layer — even a shared Notion doc — before you invest in a dedicated prompt ops platform.

Your existing project management tool (Linear, Jira, Asana) rather than a new AI-native PM tool. The overhead of switching is rarely worth it for a first project.

Evaluate tools on: output quality for your specific use case, data privacy and compliance requirements, ease of integration into existing workflows, and cost at your expected volume.
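One way to keep that evaluation honest is a simple weighted scorecard. The sketch below is only an illustration: the four criteria come from the list above, but the weights, the 1 to 5 rating scale, and the candidate names and ratings are assumptions you would replace with your own.

```python
# Criteria are from the text; the weights are illustrative assumptions.
WEIGHTS = {
    "output_quality": 0.4,
    "privacy_compliance": 0.3,
    "integration_ease": 0.2,
    "cost_at_volume": 0.1,
}


def weighted_score(ratings: dict[str, float],
                   weights: dict[str, float] = WEIGHTS) -> float:
    """Combine per-criterion ratings (assumed 1-5) into one weighted average."""
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(ratings[name] * w for name, w in weights.items()) / total_weight


# Hypothetical candidates with made-up ratings.
candidates = {
    "tool_a": {"output_quality": 5, "privacy_compliance": 3,
               "integration_ease": 4, "cost_at_volume": 2},
    "tool_b": {"output_quality": 4, "privacy_compliance": 4,
               "integration_ease": 4, "cost_at_volume": 4},
}

# Highest weighted score first.
ranked = sorted(candidates,
                key=lambda name: weighted_score(candidates[name]),
                reverse=True)
```

Agree on the weights before anyone sees a demo. Otherwise the most impressive output tends to win regardless of privacy or cost.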

## Build for Feedback Loops, Not Just Delivery

Traditional software projects ship features and measure adoption. AI projects require ongoing evaluation because model outputs can drift, degrade on edge cases, or fail silently.

Build feedback loops in from the start:

At the task level: Can users flag bad outputs directly in the interface?

At the project level: Are you logging a representative sample of inputs and outputs for weekly review?

At the team level: Is someone responsible for reviewing error patterns and translating them into prompt or pipeline improvements?

A system with no feedback mechanism will slowly erode trust until it is quietly abandoned.

## Avoid the Five Most Common Pitfalls

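Accuracy overestimation: Teams often assume AI will be more accurate than it is on their specific data. Before committing to a production deployment, run a structured evaluation: score outputs on a representative sample of your own inputs, with the domain expert judging correctness.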

No rollback plan: If the AI system produces a bad output in production, what happens? Define your fallback process before launch.

Missing human review for high-stakes outputs: Not every AI task needs human review, but high-stakes ones do. Map your tasks by stakes and build review checkpoints accordingly.

Underestimating data prep: AI projects almost always require more data cleaning and formatting than expected. Budget twice as much time as you think you need for this phase.

Treating the pilot as the product: A successful pilot proves the concept, not the production system. A pilot that works with twenty users may need significant re-architecture to handle two hundred.

## Measure What Matters

Agree on success metrics before the project starts. Common metrics for AI projects include:

Task completion rate: What percentage of inputs does the system handle without error or escalation?

Time saved per user per week: Measured against the baseline before the AI system was introduced.

Error rate: Tracked over time to catch model drift or edge case failures.

User satisfaction score: A simple weekly rating from end users. This is often an early signal of whether long-term adoption will hold.

Review metrics monthly and share results with stakeholders. AI projects that demonstrate measurable ROI get continued investment. Those that do not get quietly defunded.
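For the monthly review, the first three metrics reduce to simple arithmetic over logged interactions. The sketch below assumes a hypothetical `Interaction` record with an escalation flag, an error flag, and an estimate of minutes saved against your pre-AI baseline; the sample numbers are made up.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    """Hypothetical log record for one AI-handled task."""
    escalated: bool        # handed off to a person
    error: bool            # output was judged wrong
    minutes_saved: float   # estimate versus the pre-AI baseline


def summarize(interactions: list[Interaction],
              active_users: int, weeks: float) -> dict[str, float]:
    """Compute completion rate, error rate, and hours saved per user per week."""
    if not interactions or active_users <= 0 or weeks <= 0:
        raise ValueError("need interactions, active users, and a positive period")
    n = len(interactions)
    completed = sum(1 for i in interactions if not i.error and not i.escalated)
    errors = sum(1 for i in interactions if i.error)
    hours_saved = sum(i.minutes_saved for i in interactions) / 60
    return {
        "task_completion_rate": completed / n,
        "error_rate": errors / n,
        "hours_saved_per_user_per_week": hours_saved / active_users / weeks,
    }


# Made-up sample: four interactions from two users over one week.
sample = [
    Interaction(escalated=False, error=False, minutes_saved=30),
    Interaction(escalated=False, error=False, minutes_saved=30),
    Interaction(escalated=False, error=True, minutes_saved=0),
    Interaction(escalated=True, error=False, minutes_saved=0),
]
report = summarize(sample, active_users=2, weeks=1)
```

Computing the same figures the same way each month matters more than the exact definitions, since the trend is what reveals drift.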

## When to Bring in Outside Help

If your team is managing its first AI project without prior implementation experience, a structured engagement with an AI workflow consultant can compress the learning curve significantly — especially in the scoping and evaluation phases where early mistakes are most expensive.

A good consultant does not replace your team. They help you avoid the pitfalls outlined above, accelerate the decisions that take internal teams weeks to reach, and give you a repeatable framework you can apply to future projects.

If you are planning an AI initiative and want a clearer path from problem to production, start by mapping the workflow, risk, owner, and measurement plan. Leaf Lane can help with that kind of practical guidance, and can step into implementation when the project is ready to build.