Harness Engineering Is the New Product Surface for AI Teams

Most teams still talk about AI performance as if the prompt is the product.

That framing is getting outdated fast.

OpenAI's recent harness engineering post is useful because it makes the surrounding system visible. The article describes a team that built and shipped an internal beta product with no manually written application code, using Codex as the execution layer and humans as the steering layer. The interesting part is not the headline. It is the operational model underneath it.

The team did not get better results by finding one perfect prompt. They got better results by building a harness around the agent: repository rules, feedback loops, architecture constraints, review habits, and a system of record the model could actually inspect.

That is the shift business and engineering teams should pay attention to.

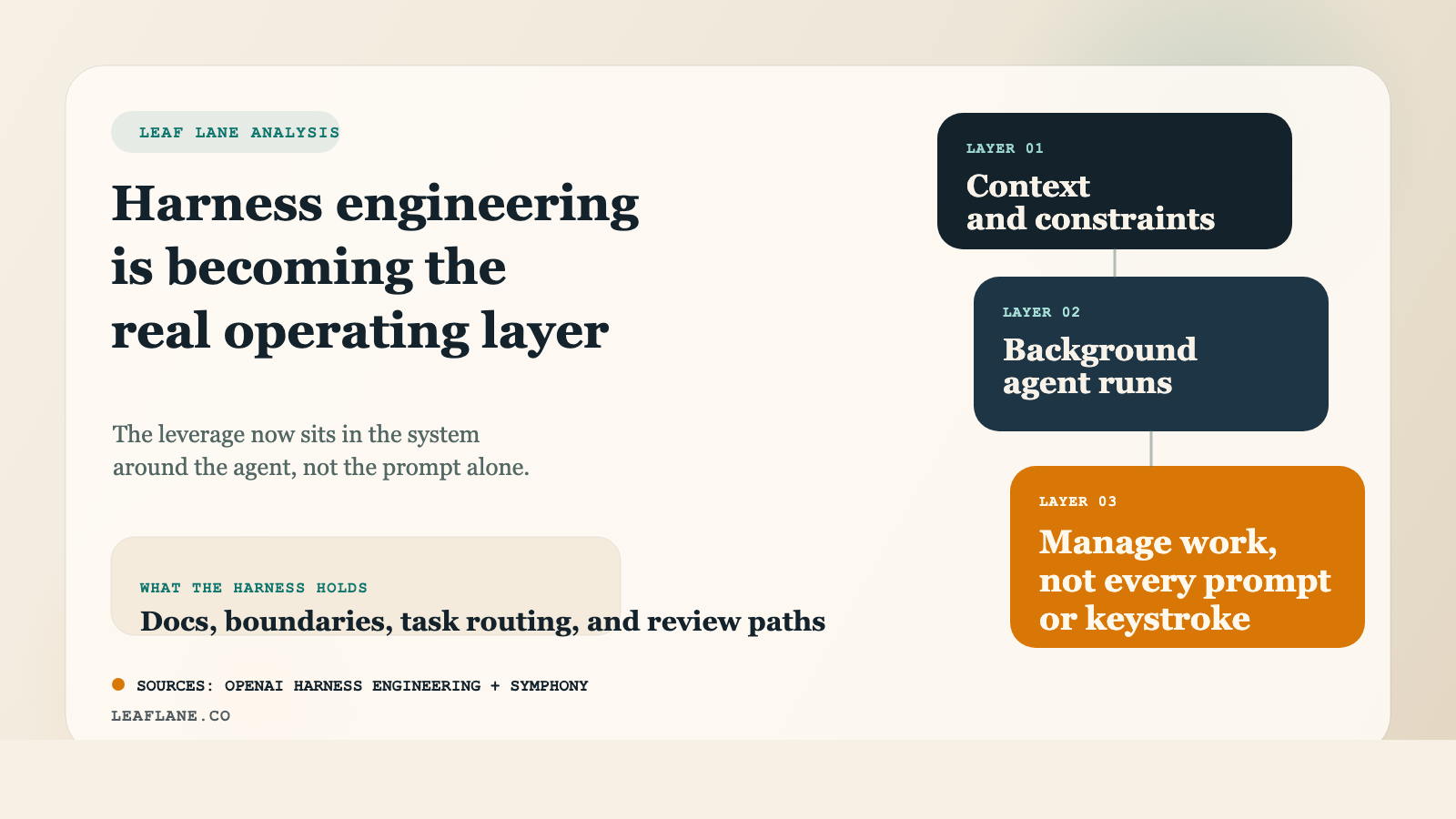

Harness engineering is the work of shaping the environment around an agent so useful behavior becomes easier, safer, and more repeatable. The model still matters. But once models are strong enough, the bottleneck moves. The limiting factor becomes the quality of the system you drop the model into.

OpenAI's write-up makes that concrete in a few important ways.

First, it reframes the engineer's job. In an agent-first workflow, the human role moves away from typing every implementation detail and toward defining intent, constraints, and feedback loops. Humans steer. Agents execute. That does not reduce the importance of engineering judgment. It raises the importance of system design.

Second, it argues for repository legibility over scattered tribal knowledge. If a key architectural decision lives in Slack, in someone's head, or in an undocumented meeting, it is effectively invisible to the agent. OpenAI's point is practical: anything the agent cannot discover in context may as well not exist. For agentic teams, good documentation is no longer just a courtesy to future hires. It is part of the runtime environment.
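
One way to make that legibility checkable is a small audit that reports which pieces of system knowledge are missing from the repository. The sketch below is illustrative only: the file names in `REQUIRED_DOCS` are hypothetical placeholders, not names taken from OpenAI's post.

```python
# Hypothetical list of knowledge an agent should be able to find in the repo.
# These paths are assumptions for illustration; substitute your own.
REQUIRED_DOCS = ["AGENTS.md", "docs/architecture.md", "docs/decisions"]


def missing_docs(repo_paths, required=REQUIRED_DOCS):
    """Return the required docs that appear neither as a file nor as a directory."""
    present = set(repo_paths)
    missing = []
    for doc in required:
        has_file = doc in present
        has_dir = any(path.startswith(doc + "/") for path in present)
        if not (has_file or has_dir):
            missing.append(doc)
    return missing
```

Run against a listing of tracked files, a check like this turns "it's in someone's head" into a visible gap that can fail a build.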

Third, it shows why strict boundaries become force multipliers. The post describes a setup with explicit layers, custom linters, structural tests, and encoded taste invariants. Human teams often resist that level of structure until a codebase becomes painful. With coding agents, that structure matters much earlier because it keeps speed from turning into drift.
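
To make "explicit layers" and "structural tests" concrete, here is a minimal sketch of a check that rejects imports pointing from a lower layer to a higher one. The layer names and the `find_violations` helper are assumptions made for illustration, not a description of OpenAI's actual linters.

```python
import ast

# Hypothetical layer order, lowest first. A module may import from its own
# layer or any lower layer, never from a higher one.
LAYERS = ["domain", "services", "api"]


def layer_of(module_name):
    """Return the layer index for a dotted module name, or None if unlayered."""
    top = module_name.split(".")[0]
    return LAYERS.index(top) if top in LAYERS else None


def find_violations(module_name, source):
    """List absolute imports in `source` that reach into a higher layer."""
    own = layer_of(module_name)
    if own is None:
        return []
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            targets = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            targets = [node.module]
        else:
            continue
        for target in targets:
            other = layer_of(target)
            if other is not None and other > own:
                violations.append(f"{module_name} imports {target}")
    return violations
```

A rule like this gives an agent immediate, mechanical feedback when a change crosses a boundary, instead of relying on a reviewer to notice the drift later.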

This is where the Symphony project becomes relevant.

Symphony is an open-source background coding agent platform from OpenAI. Its core idea is not just "run an agent in the background." It packages software work into isolated runs so teams can manage work instead of supervising every coding step in real time. That is a harness idea.

The README frames Symphony as a way to start from issues, bug reports, or prompts, hand work to background agents, and layer in review and automation around those runs. In other words, it treats the agent less like a chatbot and more like a worker inside a managed operating environment.

That matters because most organizations are still using powerful models inside weak operating environments. They have access to strong reasoning, but the surrounding workflow is loose. Requirements are underspecified. Context is fragmented. Review rules are inconsistent. Ownership is fuzzy. Verification happens late, if at all. The result is usually disappointing not because the model failed in isolation, but because the harness was too weak.

If you put the OpenAI post and Symphony side by side, a clearer pattern emerges.

The competitive advantage is moving up a level.

It is no longer just about model choice or prompt quality. It is about whether your team can build a stable interface between human judgment and agent execution. That includes:

- discoverable system knowledge
- explicit task boundaries
- structured repository conventions
- verification before high-impact changes
- review paths that improve the next run, not just the current one
- tooling that makes good behavior the default
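
Two of these items, explicit task boundaries and verification before high-impact changes, can be sketched as a small gate that runs before any agent output reaches a human reviewer. The `TaskSpec` fields and the `review_run` function are hypothetical names chosen for this example, not part of Codex or Symphony.

```python
from dataclasses import dataclass, field
from pathlib import PurePosixPath


@dataclass
class TaskSpec:
    """Hypothetical task description handed to an agent run."""
    goal: str
    allowed_paths: list  # directories the agent is allowed to modify
    required_checks: list = field(default_factory=list)  # checks that must pass


def review_run(spec, changed_files, check_results):
    """Return a list of problems; an empty list means the run can go to review."""
    problems = []
    for path in changed_files:
        p = PurePosixPath(path)
        # is_relative_to compares whole path components (Python 3.9+).
        if not any(p.is_relative_to(root) for root in spec.allowed_paths):
            problems.append(f"out of scope: {path}")
    for check in spec.required_checks:
        if not check_results.get(check, False):
            problems.append(f"check failed or missing: {check}")
    return problems
```

For example, a spec with `allowed_paths=["src/billing"]` and `required_checks=["tests", "lint"]` would flag a change to `src/auth/login.py` as out of scope and would also report any check that did not run. The point of the design is that the boundary lives in data the agent and the reviewer can both read.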

This is why I think harness engineering will become a more useful term than prompt engineering for many teams. Prompting still matters. But prompting is only one control surface inside a broader operational system. Harness engineering includes prompting, but it also includes memory, tooling, state, permissions, validation, logging, and workflow design.

That broader framing is much closer to how real businesses adopt AI successfully.

The teams getting durable value are usually not the ones chasing novelty. They are the ones turning repeated work into reliable systems. They make expectations explicit. They keep context close to the work. They define what done means. They build review and correction into the loop. Then they let the model move faster inside those boundaries.

For business leaders, the practical question is no longer "How do we get better prompts?"

It is "What harness does this workflow need so the agent can do useful work with less supervision?"

For engineering teams, the question is similar: what parts of your current process exist only in people's heads, and what would happen if an agent had to execute against your system exactly as it exists today?

That is a revealing test.

If the answer is confusion, drift, and constant cleanup, the model probably is not your first problem.

The harness is.

Sources:

- OpenAI, "Harness engineering: leveraging Codex in an agent-first world": https://openai.com/index/harness-engineering/
- OpenAI, "Symphony": https://github.com/openai/symphony/tree/main

If you're ready to invest in your agent infrastructure as a real product surface, [Leaf Lane's services](/services) focus on exactly this kind of AI systems work.