10 Practical Takeaways from OpenAI's GPT-5.4 Prompt Guidance

OpenAI's prompt guidance for GPT-5.4 is useful because it moves the discussion away from prompt tricks and toward operating discipline. The strongest guidance is not "say this magic phrase." It is "make the task contract clearer, make completion explicit, and make verification part of the workflow."

For teams building assistants, coding agents, or research workflows, that is the important shift. Better prompting is no longer just about style. It is about giving the model a reliable frame for deciding what to do, when to keep going, and when to verify before it acts.

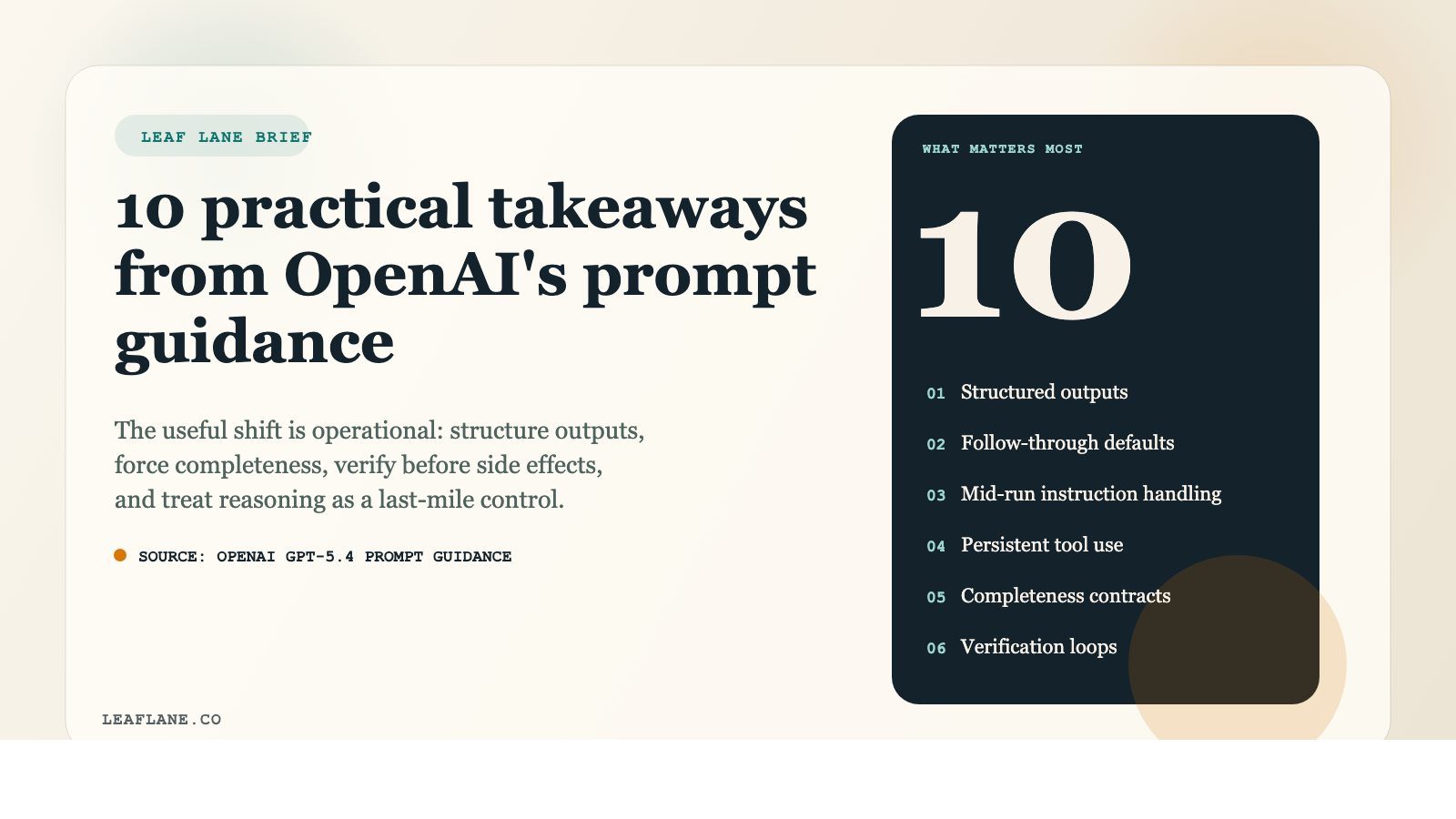

Here are the 10 takeaways that stand out most from the guide.

1. GPT-5.4 is already strong, but explicit prompting still matters.

The guide makes a useful distinction: GPT-5.4 is strong out of the box, especially on instruction following, tool use, and structured tasks. But explicit prompts still matter whenever the work depends on persistence, exact formatting, research discipline, or high-confidence actions. In practice, this means you should not over-prompt everything, but you should absolutely tighten the contract when the task can fail in consequential ways.

2. Compact, structured outputs beat sprawling responses.

One of the clearest patterns in the guide is to keep outputs compact and structured. That matters for two reasons. First, concise outputs are easier to inspect. Second, they are easier to reuse in downstream systems, especially if an agent is handing results to code, tools, or another model step. If you want consistency, define the shape of the answer instead of hoping the model guesses the right format.
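One way to define the shape of the answer is to pin it to a schema and reject replies that drift from it. The sketch below is a minimal illustration, not something taken from the guide. `TriageResult`, its fields, and the allowed severity values are hypothetical, and it assumes the model has been asked to reply with a single JSON object.

```python
import json
from dataclasses import dataclass, fields

# Hypothetical output contract for one step of a support-triage workflow.
@dataclass(frozen=True)
class TriageResult:
    summary: str
    severity: str      # must be one of ALLOWED_SEVERITIES
    next_action: str

ALLOWED_SEVERITIES = {"low", "medium", "high"}

def parse_triage(raw: str) -> TriageResult:
    """Parse a model reply and reject anything that does not match the contract."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on non-JSON text
    if not isinstance(data, dict):
        raise ValueError("expected a single JSON object")
    expected = {f.name for f in fields(TriageResult)}
    missing = expected - set(data)
    extra = set(data) - expected
    if missing or extra:
        raise ValueError(f"missing={sorted(missing)} extra={sorted(extra)}")
    if data["severity"] not in ALLOWED_SEVERITIES:
        raise ValueError(f"unexpected severity: {data['severity']!r}")
    return TriageResult(**data)
```

Rejecting a malformed reply outright, rather than repairing it silently, keeps the failure visible; a caller could re-prompt with the error message as feedback.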

3. Give the model defaults for follow-through.

The prompt guidance emphasizes setting clear defaults for what the model should do when the user has not specified every small decision. This is operationally important. Many assistant failures are not caused by bad reasoning. They happen because the model stops early, asks unnecessary clarifying questions, or becomes too literal. Clear default behavior reduces drift and helps the system keep moving.
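Defaults can live in the system prompt as a short, explicit block. This minimal sketch assumes a prompt assembled in code; the policy names and wording in `DEFAULT_POLICIES`, and the `build_defaults_section` helper, are hypothetical examples rather than language quoted from the guide.

```python
from __future__ import annotations

# Hypothetical default policies; the wording is illustrative, not quoted from the guide.
DEFAULT_POLICIES = {
    "ambiguity": "If a detail is unspecified and low-risk, choose a sensible default, state it, and continue.",
    "clarifying_questions": "Ask only when a wrong guess would be costly or hard to undo.",
    "stopping": "Finish the full requested scope before replying; do not stop at the first partial result.",
}

def build_defaults_section(overrides: dict[str, str] | None = None) -> str:
    """Render default behaviors as a bulleted block for a system prompt.

    A task-specific override replaces the default with the same name and keeps its position.
    """
    policies = {**DEFAULT_POLICIES, **(overrides or {})}
    lines = ["Default behavior when the user has not specified otherwise:"]
    lines += [f"- {name}: {rule}" for name, rule in policies.items()]
    return "\n".join(lines)
```

Keeping the defaults in one dictionary makes them easy to review and to override per task without rewriting the whole prompt.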

4. Tell the model how to handle changing instructions mid-run.

Real conversations change. The guide explicitly calls out mid-conversation instruction updates, which is a subtle but important point. If your workflow can change direction while the model is already working, the prompt should tell it how to reconcile new instructions with the old ones. Without that, long-running tasks can continue on the wrong path even after the user's intent has shifted.
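A simple reconciliation rule is "the newest instruction on a topic wins, and everything else still stands." The sketch below assumes each instruction can be tagged with a topic, which is itself a hypothetical simplification; `Instruction`, `active_instructions`, and `superseded` are illustrative names, not part of any API.

```python
from __future__ import annotations
from dataclasses import dataclass

@dataclass(frozen=True)
class Instruction:
    turn: int    # conversation turn in which the instruction was given
    topic: str   # hypothetical label such as "format" or "scope"
    text: str

def active_instructions(history: list[Instruction]) -> dict[str, Instruction]:
    """Keep the most recent instruction per topic; instructions on other topics still apply."""
    active: dict[str, Instruction] = {}
    for inst in sorted(history, key=lambda i: i.turn):
        active[inst.topic] = inst  # a later turn overwrites an earlier one on the same topic
    return active

def superseded(history: list[Instruction]) -> list[Instruction]:
    """List instructions that a newer one has replaced, so the prompt can say so explicitly."""
    active = active_instructions(history)
    return [inst for inst in history if active[inst.topic] is not inst]
```

Surfacing the superseded list, not just the active one, lets the prompt tell the model which earlier direction to abandon.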

5. Tool use needs persistence rules, not just tool access.

This is one of the most practical parts of the guide. Giving a model a tool is not enough. You also need to specify when it must keep using that tool, when it can stop, and what counts as sufficient evidence from tool results. For coding agents, browser agents, and data workflows, this is the difference between a system that grounds its actions in tool results and one that guesses too early.
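Persistence rules can be made concrete as a loop with an explicit sufficiency test and a call budget. This is a minimal sketch under stated assumptions: `search` stands in for any paginated tool, `is_sufficient` is a hypothetical, task-specific rule for what counts as enough evidence, and `gather_evidence` is an illustrative name.

```python
from __future__ import annotations
from typing import Callable

def gather_evidence(
    search: Callable[[str, int], list[str]],   # hypothetical tool: (query, page) -> results
    query: str,
    is_sufficient: Callable[[list[str]], bool],
    max_calls: int = 5,
) -> tuple[list[str], bool]:
    """Keep calling the tool until the evidence is sufficient or the budget runs out.

    Returns the collected results and whether the sufficiency rule was met.
    """
    evidence: list[str] = []
    for page in range(max_calls):
        results = search(query, page)
        if not results:          # the tool has nothing more to return
            break
        evidence.extend(results)
        if is_sufficient(evidence):
            return evidence, True
    return evidence, False
```

Returning the boolean alongside the results lets the caller decline to answer when the evidence bar was not met, instead of letting the model fill the gap.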

6. Long-horizon tasks need explicit completeness checks.

The guide recommends making completion criteria explicit for multi-step work. That is a major takeaway for anyone building agents. If the task spans multiple files, multiple sources, or multiple actions, the model should know what "done" actually means. Otherwise it may stop after the first plausible result. A completeness contract is often more valuable than more reasoning tokens.
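A completeness contract can be as simple as a list of required items checked against what the agent reports as finished. The sketch assumes a multi-file change; `CompletionContract` and the file names used with it are hypothetical.

```python
from __future__ import annotations
from dataclasses import dataclass, field

@dataclass
class CompletionContract:
    """Hypothetical definition of 'done' for a multi-step task."""
    required_items: set[str]                          # e.g. files that must be updated
    completed: set[str] = field(default_factory=set)

    def mark_done(self, item: str) -> None:
        self.completed.add(item)

    def missing(self) -> list[str]:
        # Sorted so the report is stable and easy to feed back to the model.
        return sorted(self.required_items - self.completed)

    def is_complete(self) -> bool:
        return not self.missing()
```

The `missing()` list doubles as feedback: handing it back to the model explains why the task is not done yet.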

7. Verification should happen before high-impact actions.

OpenAI's guidance on verification loops is one of the strongest sections in the document. If the action has meaningful consequences, the model should verify before it executes. That can mean checking a fact, rereading a file, confirming a calculation, or inspecting a tool result one more time. This is less glamorous than clever prompting, but it is how you reduce real-world mistakes.
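A verification gate can sit between the model's decision and the action itself. In this minimal sketch, `execute_with_verification`, `VerificationFailed`, and the `high_impact` flag are hypothetical; each check is a zero-argument callable standing in for rereading a file, recomputing a value, or re-inspecting a tool result.

```python
from __future__ import annotations
from typing import Callable

class VerificationFailed(Exception):
    """Raised when a pre-action check does not pass."""

def execute_with_verification(
    action: Callable[[], str],
    checks: list[tuple[str, Callable[[], bool]]],
    high_impact: bool,
) -> str:
    """Run every named check before a high-impact action and abort on the first failure."""
    if high_impact:  # low-impact actions skip the checks entirely
        for name, check in checks:
            if not check():
                raise VerificationFailed(f"check failed before execution: {name}")
    return action()
```

Naming each check makes a refusal explainable, which matters when a human has to decide whether to override it.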

8. Research prompts should stay locked to retrieved evidence.

For research workflows, the guide pushes a grounded pattern: search first, then cite only what was actually retrieved. That matters because many research failures come from a model papering over gaps with plausible but weakly supported claims. If you care about trust, prompt for evidence-gathering and citation discipline as part of the task, not as an afterthought.
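Citation discipline can also be checked mechanically after the model answers. This sketch assumes numbered citations in the form `[n]` that map to the IDs of retrieved documents; the citation format, the regular expressions, and both function names are assumptions for illustration.

```python
from __future__ import annotations
import re

CITATION = re.compile(r"\[(\d+)\]")          # assumed citation format: [n]
SENTENCE_END = re.compile(r"(?<=[.!?])\s+")  # naive sentence splitter, adequate for a sketch

def ungrounded_citations(answer: str, retrieved_ids: set[int]) -> list[int]:
    """Citation numbers in the answer that do not correspond to any retrieved source."""
    cited = {int(n) for n in CITATION.findall(answer)}
    return sorted(cited - retrieved_ids)

def uncited_sentences(answer: str) -> list[str]:
    """Sentences that carry no citation at all."""
    sentences = SENTENCE_END.split(answer.strip())
    return [s for s in sentences if s and not CITATION.search(s)]
```

The first check catches invented sources; the second flags claims that may have been smoothed in without evidence and deserve a second look.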

9. Coding and terminal agents need clear tool boundaries.

The guide's coding recommendations are operationally mature. It treats tool boundaries as a first-class concern: what belongs in the shell, what belongs in file edits, what should be verified, and how the model should communicate progress. This is the right lens for real agent workflows. In coding environments, prompt quality is often a question of execution policy, not wording polish.
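Tool boundaries can be written down as an explicit routing policy that the agent harness enforces. The operation names, the two-tool split, `POLICY`, `NEEDS_VERIFICATION`, and `route` below are all hypothetical; a real coding agent would define its own.

```python
from __future__ import annotations
from enum import Enum

class Tool(Enum):
    SHELL = "shell"
    EDIT_FILE = "edit_file"

# Hypothetical execution policy: which tool each kind of operation must use.
POLICY = {
    "run_tests": Tool.SHELL,
    "search_code": Tool.SHELL,
    "modify_source": Tool.EDIT_FILE,
}
# Assumed: operations that change files must be followed by a verification step.
NEEDS_VERIFICATION = {"modify_source"}

def route(operation: str, requested: Tool) -> tuple[bool, str]:
    """Allow a tool call only if it matches the policy; return (allowed, message)."""
    required_tool = POLICY.get(operation)
    if required_tool is None:
        return False, f"unknown operation {operation!r}: ask before proceeding"
    if requested is not required_tool:
        return False, f"{operation} must use {required_tool.value}, not {requested.value}"
    suffix = "; verify afterwards" if operation in NEEDS_VERIFICATION else ""
    return True, "ok" + suffix
```

Enforcing the policy in code, rather than only stating it in the prompt, means a boundary violation produces a clear message the model can act on.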

10. Reasoning effort is a tuning knob, not a substitute for a better prompt.

This may be the single most important migration takeaway. The guide explicitly frames reasoning effort as a last-mile adjustment. Before increasing it, teams should improve the prompt contract, add verification loops, and define tool persistence and completeness more clearly. That is a disciplined way to tune systems: fix the workflow first, then spend more compute only if evals still justify it.
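The "fix the workflow first" order can be encoded as an escalation loop that moves to a higher effort level only when evals still miss the target. This is a sketch under assumptions: `run_eval` is a hypothetical function returning a pass rate for a given effort setting, `choose_effort` is an illustrative name, and the `EFFORT_LEVELS` values should be checked against the API reference for the model in use.

```python
from __future__ import annotations
from typing import Callable

# Assumed effort ladder; confirm which values your model actually supports.
EFFORT_LEVELS = ["low", "medium", "high"]

def choose_effort(
    run_eval: Callable[[str], float],   # hypothetical: effort level -> eval pass rate in [0, 1]
    target: float,
    start: str = "low",
) -> tuple[str, float, bool]:
    """Return the lowest effort level whose pass rate meets the target.

    Intended to run only after prompt, verification, and completeness fixes are in place.
    If no level meets the target, return the best-scoring level and False.
    """
    scores: dict[str, float] = {}
    for level in EFFORT_LEVELS[EFFORT_LEVELS.index(start):]:
        score = run_eval(level)
        if score >= target:
            return level, score, True
        scores[level] = score
    best = max(scores, key=scores.get)
    return best, scores[best], False
```

Stopping at the first level that clears the bar keeps compute spend tied to measured need rather than defaulting to the maximum.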

The larger lesson is that prompt engineering is becoming workflow engineering. The best prompts for GPT-5.4 are not ornate. They are clear about structure, explicit about priorities, grounded in evidence, and realistic about verification. That is a better standard for building reliable AI systems.

If you are updating an assistant or agent stack, this guide is worth reading closely. The biggest gains are likely to come from a better task contract, not a fancier prompt.

Source:

OpenAI, "Prompt guidance for GPT-5.4"

https://developers.openai.com/api/docs/guides/prompt-guidance/

If you're looking for a practical starting point to apply these principles in your own workflow, the [AI Quick Start Guide](/ai-quick-start-guide) covers the fundamentals in a hands-on, business-focused format.