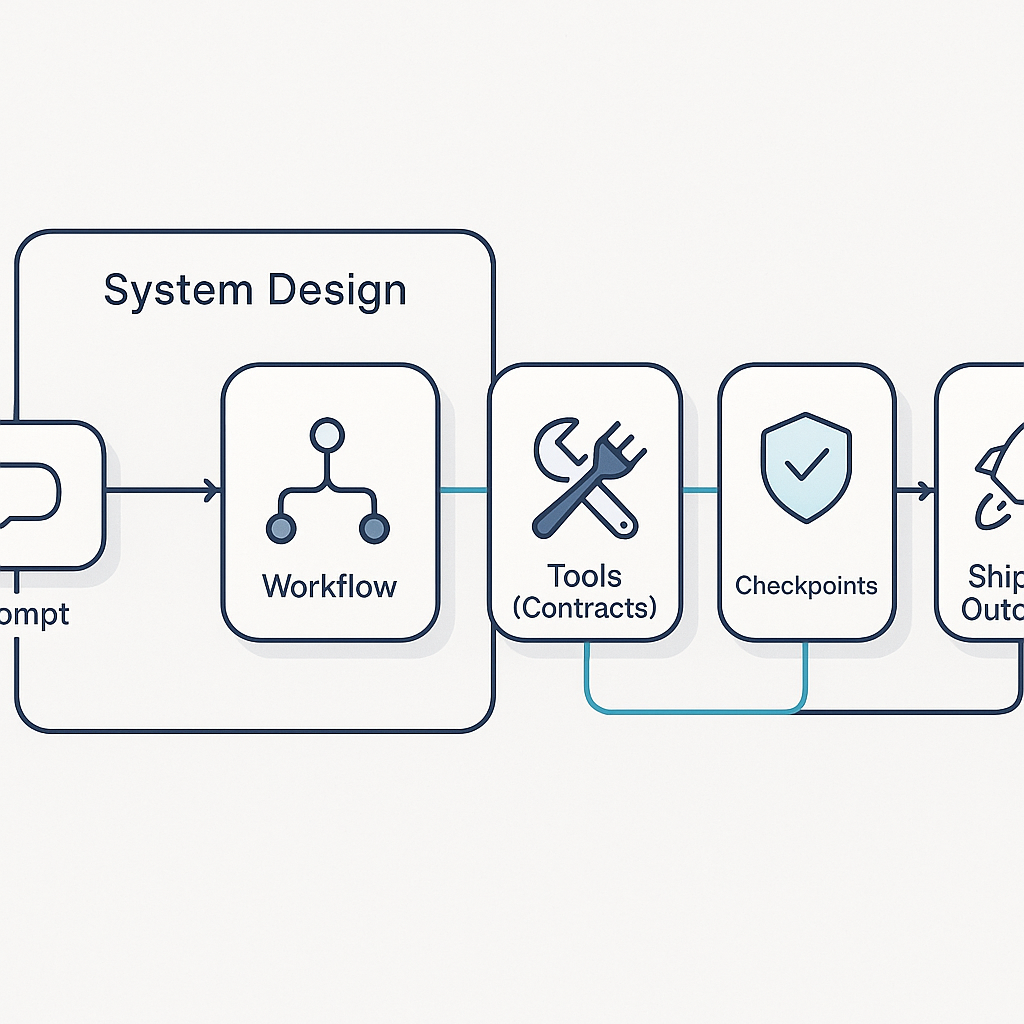

Shipping AI Agents Means Designing the System, Not Just the Prompt


Most teams still evaluate AI progress one prompt at a time. The benchmark is usually whether a single response looks smarter than last week's.

That is the wrong unit of progress.

In production, useful AI outcomes come from systems: how work is scoped, how tools are invoked, how long tasks can run, and how output quality is checked before anything reaches a customer or a critical workflow.

Recent discussions on X about multi-agent research loops and practical Codex execution patterns offer a useful frame for this shift. The lesson across them is consistent: a stronger model helps, but structure does most of the heavy lifting.

Here are three operating patterns that are proving more reliable than prompt tweaks alone.

1) Move from one-shot requests to staged workflows

A single giant prompt is tempting because it feels fast. In practice, it hides failure points.

A better approach is to break work into explicit stages:

- plan

- gather evidence

- execute

- verify

- publish

This keeps each stage testable. If quality drops, you know whether it was a research issue, a tooling issue, or an execution issue. You also get cleaner retry behavior because you can rerun one stage instead of redoing everything.
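A minimal Python sketch of the staged approach, assuming each stage is a plain function that reads the artifacts produced so far and returns its own. The stage functions are hypothetical stand-ins for model or tool calls, and `run_pipeline` and `StageError` are illustrative names rather than any framework's API. Failures are tagged with the stage that raised them, and `start_at` reruns from one stage while reusing earlier artifacts.

```python
from typing import Callable, Dict, List, Optional, Tuple

# A stage reads the artifacts produced so far and returns its own artifact.
Stage = Callable[[Dict[str, object]], object]


class StageError(Exception):
    """Wraps a failure so it is attributed to the stage that raised it."""

    def __init__(self, stage: str, cause: Exception):
        super().__init__(f"stage '{stage}' failed: {cause}")
        self.stage = stage


def run_pipeline(
    stages: List[Tuple[str, Stage]],
    artifacts: Optional[Dict[str, object]] = None,
    start_at: Optional[str] = None,
) -> Dict[str, object]:
    """Run stages in order, optionally resuming at `start_at` with earlier artifacts."""
    artifacts = dict(artifacts or {})
    names = [name for name, _ in stages]
    start = names.index(start_at) if start_at is not None else 0
    for name, fn in stages[start:]:
        try:
            artifacts[name] = fn(artifacts)
        except Exception as exc:
            raise StageError(name, exc) from exc
    return artifacts


# Hypothetical stand-ins for model or tool calls.
def plan(a):
    return ["collect sources", "summarize"]


def gather_evidence(a):
    return ["doc-1", "doc-2"]


def execute(a):
    return f"summary of {len(a['gather_evidence'])} sources"


def verify(a):
    # Lightweight gate: refuse to publish an empty or malformed draft.
    if not a["execute"].startswith("summary of"):
        raise ValueError("draft did not pass verification")
    return True


def publish(a):
    return {"status": "published", "body": a["execute"]}


STAGES = [
    ("plan", plan),
    ("gather_evidence", gather_evidence),
    ("execute", execute),
    ("verify", verify),
    ("publish", publish),
]
```

For example, `run_pipeline(STAGES, artifacts=previous, start_at="execute")` reruns execution, verification, and publishing without repeating planning or evidence gathering.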

Cloudflare’s Browser Rendering /crawl endpoint, announced in its March 10, 2026 changelog, is a good example of infrastructure moving in this direction. Instead of writing and maintaining custom crawler orchestration for every project, teams can submit a start URL, run crawl jobs asynchronously, and retrieve structured output with clearer controls over crawl scope and behavior. That shortens the “build plumbing” phase and lets teams spend more time on validation and decision logic.
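The submit, poll, retrieve shape of an async crawl job can be sketched as follows. This is not Cloudflare's actual API: `FakeCrawlService`, its methods, and the `max_pages` scope parameter are hypothetical, and an in-memory service stands in for the network so the example runs on its own. The real request and response fields are in Cloudflare's documentation.

```python
import itertools
import time
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class CrawlJob:
    start_url: str
    max_pages: int               # hypothetical scope control
    polls_until_done: int = 2    # simulates work finishing asynchronously
    status: str = "queued"
    pages: List[Dict[str, str]] = field(default_factory=list)


class FakeCrawlService:
    """In-memory stand-in for an async crawl API (not Cloudflare's interface)."""

    def __init__(self) -> None:
        self._jobs: Dict[str, CrawlJob] = {}
        self._ids = itertools.count(1)

    def submit(self, start_url: str, max_pages: int) -> str:
        job_id = f"job-{next(self._ids)}"
        self._jobs[job_id] = CrawlJob(start_url, max_pages)
        return job_id

    def status(self, job_id: str) -> str:
        job = self._jobs[job_id]
        if job.status != "completed":
            job.polls_until_done -= 1
            if job.polls_until_done <= 0:
                job.status = "completed"
                # Structured output: one record per crawled page.
                job.pages = [
                    {"url": f"{job.start_url}/page-{i}", "markdown": f"# Page {i}"}
                    for i in range(job.max_pages)
                ]
            else:
                job.status = "running"
        return job.status

    def results(self, job_id: str) -> List[Dict[str, str]]:
        return list(self._jobs[job_id].pages)


def crawl(service, start_url: str, max_pages: int,
          poll_interval: float = 0.0, max_polls: int = 10) -> List[Dict[str, str]]:
    """Submit a job, poll until it completes, then fetch structured results."""
    job_id = service.submit(start_url, max_pages)
    for _ in range(max_polls):
        if service.status(job_id) == "completed":
            return service.results(job_id)
        time.sleep(poll_interval)
    # Bounded polling: never wait forever on a job that may be stuck.
    raise TimeoutError(f"{job_id} did not complete within {max_polls} polls")
```

The client code only owns submission, a bounded polling loop, and result handling; the crawl orchestration itself lives behind the service boundary.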

2) Design for long-running work with checkpoints

High-value agent tasks often take longer than a single interactive burst. If your system cannot checkpoint, compact, or resume, performance degrades as context grows and operators lose trust.

Recent OpenAI Codex guidance emphasizes this directly: Codex workflows now support longer autonomous runs, and context compaction is a first-class capability for multi-hour task execution. The implication for teams is operational, not just model-level:

- define handoff boundaries

- persist intermediate artifacts

- require verification before completion

If your architecture assumes one uninterrupted run, you will eventually hit avoidable failure modes in cost, latency, or reliability.
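A minimal sketch of those three practices, assuming each step produces a JSON-serializable artifact and a local file is an acceptable checkpoint store. Step names act as handoff boundaries, state is persisted after every step, and a `verify` callback gates completion. `run_with_checkpoints` is a hypothetical helper, and it does not model context compaction.

```python
import json
from pathlib import Path
from typing import Callable, Dict, List, Tuple

# A step reads the persisted state and returns a JSON-serializable artifact.
Step = Tuple[str, Callable[[Dict[str, object]], object]]


def run_with_checkpoints(
    steps: List[Step],
    checkpoint: Path,
    verify: Callable[[Dict[str, object]], bool],
) -> Dict[str, object]:
    # Resume: reload artifacts persisted by an earlier, possibly interrupted run.
    state = json.loads(checkpoint.read_text()) if checkpoint.exists() else {}
    for name, fn in steps:
        if name in state:
            continue  # finished in a previous run, so do not redo it
        state[name] = fn(state)
        # Persist after every step: a crash loses at most the step in flight.
        checkpoint.write_text(json.dumps(state))
    # Verification gate: only a run that passes is recorded as complete.
    if not verify(state):
        raise RuntimeError("verification failed; run left incomplete")
    state["_complete"] = True
    checkpoint.write_text(json.dumps(state))
    return state
```

If a run dies partway through, calling `run_with_checkpoints` again with the same checkpoint file skips every step already recorded and resumes at the first missing one.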

3) Treat tool contracts as product APIs

Many agent failures are not “intelligence” failures. They are contract failures.

When tool inputs and outputs are vague, an agent has to guess:

- what format to send

- what counts as success

- what to do on partial failure

Teams that ship consistently document tool contracts the same way they document customer-facing APIs:

- strict input schema

- explicit success and error states

- deterministic fallback behavior

This turns “agent behavior” into an engineering surface you can debug and improve. It also makes multi-agent coordination practical, because each step can trust the shape of the previous step’s output.
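Here is one way to express such a contract in Python, assuming a hypothetical `search_docs` tool with an injected `backend` function. The input dataclass rejects malformed calls up front, every call returns either `Success` or `Failure` with a machine-readable code, and each failure mode maps to one fixed response. The field names, error codes, and limits are illustrative assumptions, not a standard.

```python
from dataclasses import dataclass
from typing import Callable, List, Union


@dataclass(frozen=True)
class SearchInput:
    """Strict input schema for a hypothetical `search_docs` tool."""
    query: str
    max_results: int = 5

    def __post_init__(self) -> None:
        if not isinstance(self.query, str) or not self.query.strip():
            raise ValueError("query must be a non-empty string")
        if not isinstance(self.max_results, int) or not 1 <= self.max_results <= 20:
            raise ValueError("max_results must be an integer from 1 to 20")


@dataclass(frozen=True)
class Success:
    results: List[str]
    truncated: bool = False  # state it explicitly instead of silently dropping hits


@dataclass(frozen=True)
class Failure:
    code: str        # machine-readable: "INVALID_INPUT" or "UPSTREAM_TIMEOUT"
    retryable: bool  # tells the caller whether retrying can help
    message: str


ToolResult = Union[Success, Failure]


def search_docs(raw: dict, backend: Callable[[str], List[str]]) -> ToolResult:
    # Unknown or missing fields raise TypeError; bad values raise ValueError.
    try:
        args = SearchInput(**raw)
    except (TypeError, ValueError) as exc:
        return Failure("INVALID_INPUT", retryable=False, message=str(exc))
    try:
        hits = backend(args.query)
    except TimeoutError:
        # Fixed fallback: a timeout always maps to the same retryable failure.
        return Failure("UPSTREAM_TIMEOUT", retryable=True, message="search backend timed out")
    return Success(results=hits[: args.max_results], truncated=len(hits) > args.max_results)
```

Because the agent always receives one of two shapes, a downstream step can branch on `isinstance(result, Failure)` and `result.retryable` instead of parsing free-form error text.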

What this means for operators right now

If you want better outcomes this quarter, do not start by rewriting every prompt. Start by hardening the system around the prompt:

- define stages

- enforce checkpoints

- tighten tool contracts

- add lightweight verification gates

Prompt quality still matters. But once you are past the prototype phase, operating model quality matters more.

The teams pulling ahead are not just using better models. They are building better rails.

Source notes

- Christine Yip (@christinetyip) on multi-agent autoresearch framing: https://x.com/christinetyip/status/2031867233387810949 and account: https://x.com/christinetyip

- Paul Solt (@PaulSolt) referencing Codex best-practices guidance: https://x.com/PaulSolt/status/2031869479202763142 and account: https://x.com/PaulSolt

- OpenAI Codex Prompting Guide (Feb 25, 2026): https://developers.openai.com/cookbook/examples/gpt-5/codex_prompting_guide

- Cloudflare Browser Rendering /crawl changelog (Mar 10, 2026): https://developers.cloudflare.com/changelog/post/2026-03-10-br-crawl-endpoint/