AI Agents Need an Operating Model, Not Just a Better Prompt

AI agents are getting better fast, but that does not mean they are becoming self-managing businesses in a box. The real shift is narrower and more useful: agents are becoming easier to aim when the work around them is explicit. In practice, the differentiator is rarely the model alone. It is the operating model wrapped around the model.

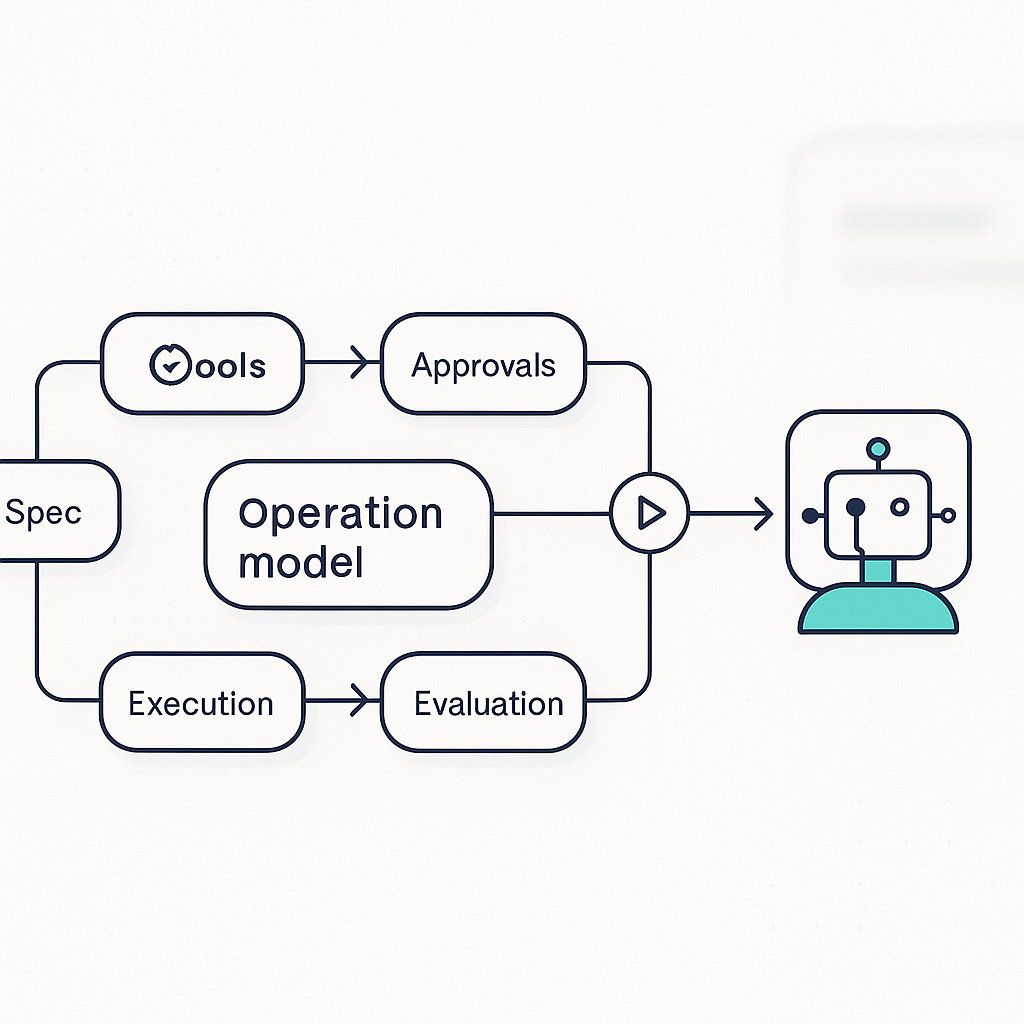

That operating model has four parts. First, someone has to define the job clearly enough that the agent can decompose it. Second, the system needs tools and permissions that fit the task instead of exposing everything. Third, there has to be an approval and verification loop so the agent does not quietly compound mistakes. Fourth, the work needs to leave behind structure: tasks, artifacts, logs, and outcomes that can be reviewed and improved. Without those pieces, even strong models spend too much time guessing what good work looks like.
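To make the second and fourth parts concrete, here is a minimal Python sketch. It assumes a hypothetical `ScopedToolbox` wrapper, not any framework's real API. The agent can only call the tools a job explicitly grants. Every call is appended to a reviewable log, including refused ones. The tool names `read_ticket` and `issue_refund` are illustrative placeholders.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class ScopedToolbox:
    """Hypothetical wrapper: exposes only the tools a job is granted and logs every call."""
    tools: dict[str, Callable[..., Any]]
    allowed: set[str]
    log: list[dict[str, Any]] = field(default_factory=list)

    def call(self, name: str, **kwargs: Any) -> Any:
        if name not in self.allowed:
            # Refused calls are logged too, so reviewers can see what the agent attempted.
            self.log.append({"tool": name, "args": kwargs, "status": "denied"})
            raise PermissionError(f"tool '{name}' is not granted for this job")
        result = self.tools[name](**kwargs)
        self.log.append({"tool": name, "args": kwargs, "status": "ok"})
        return result


# Hypothetical tools; a real deployment would wrap actual systems.
def read_ticket(ticket_id: str) -> str:
    return f"contents of {ticket_id}"


def issue_refund(order_id: str, amount: float) -> str:
    return f"refunded {amount} on {order_id}"


toolbox = ScopedToolbox(
    tools={"read_ticket": read_ticket, "issue_refund": issue_refund},
    allowed={"read_ticket"},  # this job only needs read access
)

print(toolbox.call("read_ticket", ticket_id="T-1"))
try:
    toolbox.call("issue_refund", order_id="O-9", amount=20.0)
except PermissionError as err:
    print(err)
print([entry["status"] for entry in toolbox.log])
```

The design choice worth noting is that the refusal is recorded rather than silently dropped. An attempted out-of-scope action is itself useful evidence when reviewing a run.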

This is why the most credible guidance from major AI labs no longer treats prompting as the whole craft. Anthropic's guidance on building effective agents centers on workflows, tool use, and incremental composition rather than abstract autonomy. OpenAI's agent guidance lands in a similar place, emphasizing clear tool boundaries, handoffs, guardrails, and evals. That convergence matters. When independent builders and model providers start describing the same pattern, it usually means the bottleneck has moved from raw capability to operational design.

The strongest agent demos now follow that logic. A detailed spec becomes the starting interface. A backlog or task list becomes the memory of the work. Approval steps become part of the product rather than a patch applied for safety theater. Evaluation is no longer a separate research exercise; it becomes the mechanism that determines whether a run can continue, retry, or stop. In that setup, the prompt still matters, but it behaves more like one component in a system than a magical source of truth.
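The idea that evaluation decides whether a run continues, retries, or stops can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions. `run_step` stands in for one agent step, and `evaluate` stands in for whatever check a team actually trusts, such as tests, a rubric, or a reviewer. The names `gate` and `run_backlog`, along with the retry budget of 2, are hypothetical choices for this sketch.

```python
from enum import Enum
from typing import Callable


class Decision(Enum):
    CONTINUE = "continue"
    RETRY = "retry"
    STOP = "stop"


def gate(passed: bool, attempt: int, max_retries: int) -> Decision:
    """Turn an evaluation result into a control decision for the run."""
    if passed:
        return Decision.CONTINUE
    if attempt < max_retries:
        return Decision.RETRY
    return Decision.STOP  # out of retries: halt instead of compounding the mistake


def run_backlog(
    tasks: list[str],
    run_step: Callable[[str, int], str],
    evaluate: Callable[[str, str], bool],
    max_retries: int = 2,  # extra attempts allowed after the first try
) -> tuple[list[str], list[str]]:
    """Work through a task list; evaluation decides whether each task advances."""
    done: list[str] = []
    for i, task in enumerate(tasks):
        attempt = 0
        while True:
            output = run_step(task, attempt)
            decision = gate(evaluate(task, output), attempt, max_retries)
            if decision is Decision.CONTINUE:
                done.append(task)
                break
            if decision is Decision.STOP:
                return done, tasks[i:]  # unfinished work goes back to a human
            attempt += 1
    return done, []


def fake_step(task: str, attempt: int) -> str:
    # Stub agent: drafting passes on the second try, pricing never passes.
    if task == "draft email" and attempt == 0:
        return "draft v1 (too long)"
    if task == "pick pricing":
        return "guess"
    return f"{task}: ok"


def fake_eval(task: str, output: str) -> bool:
    return output.endswith(": ok")


done, blocked = run_backlog(
    ["summarize notes", "draft email", "pick pricing", "send report"],
    fake_step,
    fake_eval,
)
print(done)     # ['summarize notes', 'draft email']
print(blocked)  # ['pick pricing', 'send report']
```

The run stops at the task it cannot get right instead of pushing a guess downstream. The remaining backlog then becomes an explicit handoff rather than a hidden failure.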

That distinction is important for any business trying to use agents seriously. If you hand an agent a vague goal like "improve our onboarding flow," you are asking it to invent strategy, judgment, and execution criteria at the same time. If you instead provide the context, the constraints, the success metrics, the tools it can use, and the moments where a human needs to approve the next step, the same model suddenly looks much more capable. The model did not become dramatically smarter. The work became dramatically more legible.
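One way to make that difference checkable is to treat the brief as structured data. The following is a minimal Python sketch that assumes a hypothetical `TaskBrief` with one field for each element the paragraph lists: context, constraints, success metrics, allowed tools, and approval points. The `missing_parts` helper simply reports which fields are empty, so a vague goal is flagged before any agent runs. All example values are illustrative.

```python
from dataclasses import dataclass, field, fields


@dataclass
class TaskBrief:
    """Hypothetical brief: the goal plus the context the agent should not have to invent."""
    goal: str
    context: str = ""
    constraints: list[str] = field(default_factory=list)
    success_metrics: list[str] = field(default_factory=list)
    allowed_tools: list[str] = field(default_factory=list)
    approval_points: list[str] = field(default_factory=list)


def missing_parts(brief: TaskBrief) -> list[str]:
    """Return the names of empty fields; an empty result means the brief is complete."""
    return [f.name for f in fields(brief) if not getattr(brief, f.name)]


vague = TaskBrief(goal="Improve our onboarding flow")
print(missing_parts(vague))
# ['context', 'constraints', 'success_metrics', 'allowed_tools', 'approval_points']

specific = TaskBrief(
    goal="Reduce drop-off at the email-verification step of signup",
    context="Hypothetical: new users often abandon signup at email verification.",
    constraints=["No changes to billing pages", "Copy must pass brand review"],
    success_metrics=["Verification completion rate improves over the baseline"],
    allowed_tools=["read_analytics", "draft_copy"],
    approval_points=["Before any change ships to production"],
)
print(missing_parts(specific))  # []
```

A completeness check like this cannot tell whether the brief is good. It only ensures that nobody silently delegated the strategy to the model.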

This is also the practical answer to the hype cycle around full autonomy. Multi-step agent systems can absolutely produce finished work over long horizons, but only when the environment gives them enough scaffolding to stay aligned with the objective. An agent with no operating model is not autonomous. It is under-specified. That is why so many teams eventually rediscover the same components: typed workflows, checkpoints, retries, audit trails, and constrained tools. These are not bureaucratic add-ons. They are the product surface that makes autonomy usable.
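Several of those components fit in a small amount of code: typed steps, a human checkpoint, and an audit trail. This is a minimal Python sketch, not a reference design. The names `Step`, `AuditEvent`, `run_workflow`, and the `approve` callback are hypothetical. `approve` stands in for however a team actually collects a human decision.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class Step:
    """Hypothetical typed workflow step; needs_approval marks a human checkpoint."""
    name: str
    action: Callable[[], str]
    needs_approval: bool = False


@dataclass(frozen=True)
class AuditEvent:
    at: datetime
    step: str
    event: str
    detail: str = ""


def run_workflow(steps: list[Step], approve: Callable[[Step], bool]) -> list[AuditEvent]:
    """Run steps in order, pause at checkpoints, and record every event."""
    trail: list[AuditEvent] = []

    def record(step: Step, event: str, detail: str = "") -> None:
        trail.append(AuditEvent(datetime.now(timezone.utc), step.name, event, detail))

    for step in steps:
        if step.needs_approval:
            if not approve(step):
                record(step, "rejected")
                break  # interruptible: a rejected checkpoint halts the run
            record(step, "approved")
        record(step, "completed", step.action())
    return trail


steps = [
    Step("draft_changes", lambda: "copy edits drafted"),
    Step("publish", lambda: "published", needs_approval=True),
]
trail = run_workflow(steps, approve=lambda step: False)  # the reviewer declines
for e in trail:
    print(e.at.isoformat(), e.step, e.event, e.detail)
```

Here the reviewer's rejection stops the run before `publish` executes. The trail still shows exactly what happened and when, which is the kind of evidence that makes longer autonomous runs reviewable.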

For founders and operators, the implication is straightforward. Do not ask whether your model is advanced enough for agents. Ask whether your work is structured enough for agents. Can a spec be written clearly? Can a task be broken into observable steps? Can tools be limited to what the job actually needs? Can success be evaluated before the output reaches a customer? If the answer is no, upgrading the model will not rescue the workflow. It will just produce faster confusion.

The next wave of useful agent products will not win because they sound the most autonomous. They will win because they make complex work inspectable, interruptible, and repeatable. The market is starting to reward systems that turn intent into a workflow, a workflow into execution, and execution into evidence. That is a much more durable promise than the promise of artificial coworkers who merely seem clever in a demo.

In other words, the real product is not the prompt. It is the operating model that turns model intelligence into accountable work.

If you are building a real operating model for AI, Leaf Lane can help turn the idea into clear workflows, governance, and implementation steps that fit the way your business actually works.