Your First AI Workflow Should Have a Kill Switch

Most businesses do not need to start their AI work with a fully autonomous process. They need one useful workflow that can help without creating a mess if something goes wrong.

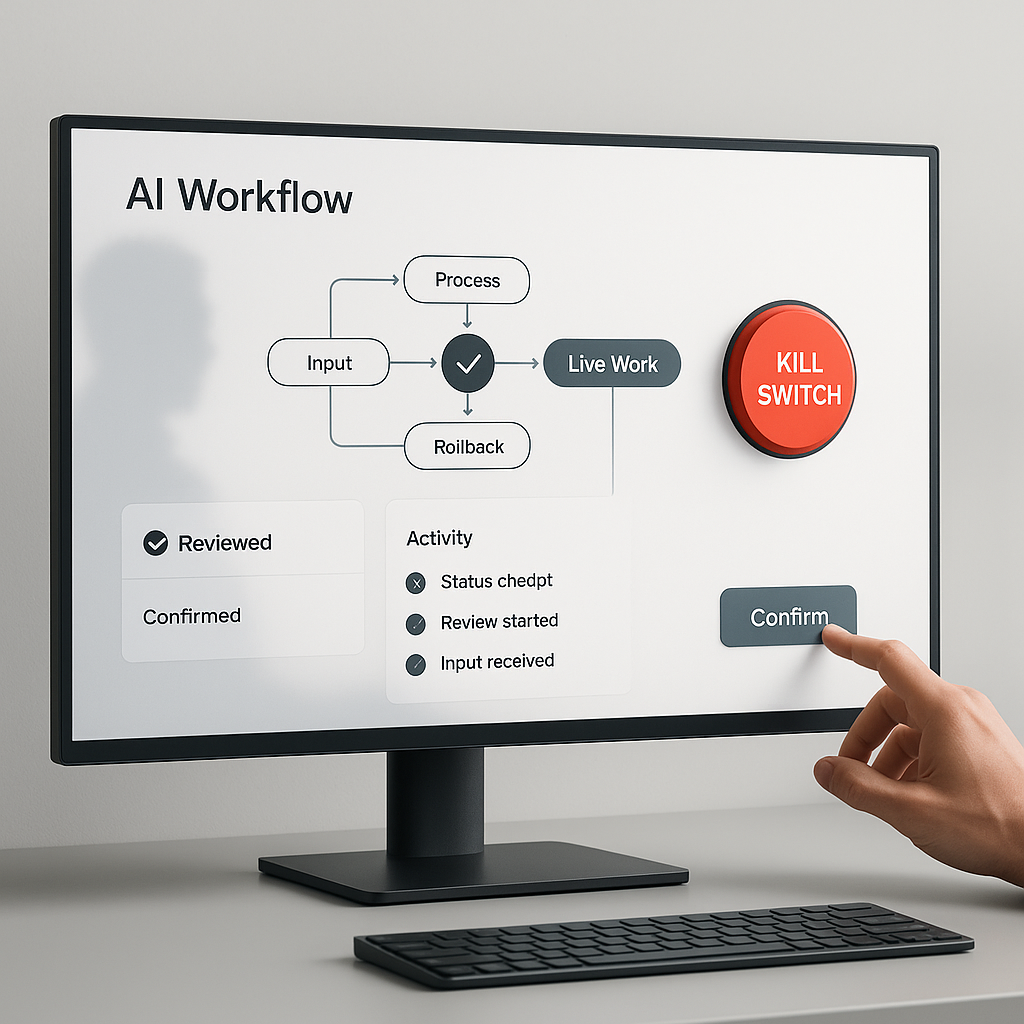

That is why the first serious AI workflow should have a kill switch.

A kill switch is not a dramatic red button. It is a practical operating rule: when the assistant sees a certain risk, reaches a certain boundary, or lacks enough confidence, it stops moving forward and hands the work back to a person.

That simple rule changes the quality of the whole workflow. Instead of asking, "Can AI do this?" the business starts asking, "When should this workflow pause, ask, log, or stop?"

That is the right question before AI touches customer messages, invoices, calendars, CRM records, reports, files, or public content.

Start With One Workflow That Already Has Consequences

The best first candidate is not the flashiest task. It is a repeated workflow where the team already understands the work and where mistakes have visible consequences.

For example:

A customer support assistant drafts replies from recent tickets.

A scheduling assistant finds open times and prepares appointment confirmations.

A billing assistant prepares invoice notes from completed work.

A content assistant drafts and queues posts for review.

A CRM assistant updates deal stages after a sales call.

Each of these workflows can be useful. Each can also create real problems if it acts without the right boundaries. A support reply can promise the wrong thing. A scheduling workflow can double-book a calendar. A billing workflow can use the wrong amount. A content workflow can publish before a human has approved the claim. A CRM workflow can overwrite useful account context.

The point is not to avoid automation. The point is to design the first version so the assistant knows exactly where useful help ends and human judgment begins.

Map the Risk Points Before You Automate

Before building the workflow, list every place where the assistant crosses from analysis into action.

The useful categories are simple:

Read access: What files, emails, records, transcripts, exports, calendars, or dashboards can the assistant inspect?

Write access: What can it change, save, tag, move, schedule, publish, or delete?

External contact: Can it send an email, text a customer, post publicly, or notify a vendor?

Money movement: Can it create an invoice, issue a refund, change pricing, submit an order, or affect payroll?

Customer record changes: Can it update a CRM, support ticket, project board, account note, or booking record?

Public claims: Can it publish content, respond to reviews, update a website, or make statements customers may rely on?

If a step touches one of those categories, it needs a decision rule. Some steps can run automatically. Some should ask for approval. Some should only create a draft. Some should stop completely.

That map is the beginning of the kill switch.
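If it helps to see the risk map as something a workflow can actually enforce, here is a minimal Python sketch. The category names and the rule assigned to each are illustrative assumptions, not recommendations, and your own map will differ. The one deliberate design choice is that a step touching several categories gets the most restrictive rule, and a category nobody mapped defaults to stop.

```python
from enum import IntEnum


class Rule(IntEnum):
    """Decision rules from the risk map, ordered from least to most restrictive."""
    AUTO = 1        # runs without a person
    APPROVAL = 2    # runs only after a person approves
    DRAFT_ONLY = 3  # assistant prepares a draft; a person takes the action
    STOP = 4        # assistant does not take this step at all


# Illustrative assignments only; each business should set its own.
RISK_MAP = {
    "read_access": Rule.AUTO,
    "write_access": Rule.APPROVAL,
    "customer_record_changes": Rule.APPROVAL,
    "external_contact": Rule.DRAFT_ONLY,
    "public_claims": Rule.DRAFT_ONLY,
    "money_movement": Rule.STOP,
}


def rule_for(step_categories):
    """Return the most restrictive rule among the categories a step touches.

    A category missing from the map is treated as STOP, so an unplanned
    step cannot run by default. A step touching no category falls back to AUTO.
    """
    return max((RISK_MAP.get(c, Rule.STOP) for c in step_categories),
               default=Rule.AUTO)


# A step that reads tickets and then emails a customer is draft-only.
print(rule_for(["read_access", "external_contact"]).name)  # DRAFT_ONLY
```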

Define the Stop Conditions

A good stop condition is specific enough that the assistant can follow it without guessing.

Weak rule: "Be careful with sensitive data."

Better rule: "If a customer message includes payment details, health information, legal terms, account credentials, or a complaint about money owed, do not draft a final response. Create a summary and escalate to the owner."

Weak rule: "Ask before making changes."

Better rule: "The assistant may tag CRM records and draft follow-up tasks, but it may not change deal stage, owner, invoice status, or customer-facing notes without explicit approval."

Weak rule: "Do not publish anything risky."

Better rule: "The assistant may prepare a draft, excerpt, image, and social copy, but publishing requires a human to confirm the live URL, image, sources, and final status."

The difference matters. Vague safety language makes people feel better. Concrete stop conditions make the workflow safer.
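The first "better rule" can be sketched as a check that runs before any drafting happens. This is a minimal Python illustration under loud assumptions: the trigger names and keyword patterns are hypothetical, and plain keyword matching will miss plenty of real cases. What it shows is the shape of a stop condition: detect the trigger, then summarize and escalate instead of drafting a final reply.

```python
import re
from dataclasses import dataclass, field
from typing import List

# Hypothetical keyword triggers for the support rule. Real messages need
# better detection; this only shows where the stop condition sits.
STOP_TRIGGERS = {
    "payment_details": re.compile(r"\b(card number|cvv|iban|routing number)\b", re.I),
    "health_information": re.compile(r"\b(diagnosis|prescription|medical)\b", re.I),
    "legal_terms": re.compile(r"\b(lawsuit|attorney|lawyer|breach of contract)\b", re.I),
    "account_credentials": re.compile(r"\b(password|login code|2fa code)\b", re.I),
    "money_owed_complaint": re.compile(r"\b(overcharged|refund|money back|owed)\b", re.I),
}


@dataclass
class Decision:
    action: str                                         # "draft_reply" or "escalate_to_owner"
    triggers: List[str] = field(default_factory=list)   # stop conditions that fired
    summary: str = ""                                   # handed to the owner on escalation


def handle_message(message: str) -> Decision:
    fired = [name for name, pattern in STOP_TRIGGERS.items()
             if pattern.search(message)]
    if fired:
        # Stop condition: no final response. Summarize and escalate instead.
        # The first 200 characters stand in for a real summary here.
        return Decision("escalate_to_owner", fired, message[:200])
    return Decision("draft_reply")


print(handle_message("I was overcharged last month.").triggers)
# ['money_owed_complaint']
```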

Use Approval Gates Where the Work Becomes Visible

A useful AI workflow can still move quickly with approval gates. The gate just needs to sit at the right point.

For many business workflows, approval should happen before one of these actions:

Sending something to a customer.

Changing a live system of record.

Spending or refunding money.

Publishing public content.

Deleting or overwriting information.

Using data that the customer did not expect to be used that way.

A support workflow might read tickets and draft replies automatically, but require approval before sending. A scheduling workflow might find available windows automatically, but require approval before confirming a meeting. A reporting workflow might clean a spreadsheet and flag exceptions automatically, but require approval before emailing the report to the client.

That is not slowing AI down. It is putting human judgment where the business impact happens.
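An approval gate can be as simple as a queue between "the assistant decided to do this" and "this actually happened." The Python sketch below assumes hypothetical action names for the visible actions listed above. Ungated work such as drafting runs immediately; gated actions wait until a named person approves or rejects them, and a rejected action's side effect never runs.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, List, Optional

# Hypothetical names for the visible actions that always need a person.
GATED_ACTIONS = {
    "send_to_customer", "update_system_of_record", "spend_or_refund",
    "publish", "delete_or_overwrite", "unexpected_data_use",
}


@dataclass
class PendingAction:
    name: str
    run: Callable[[], Any]            # the side effect; not called until approved
    approved_by: Optional[str] = None


@dataclass
class ApprovalGate:
    pending: List[PendingAction] = field(default_factory=list)
    done: List[PendingAction] = field(default_factory=list)

    def submit(self, name: str, run: Callable[[], Any]) -> Optional[Any]:
        """Run ungated work now; park gated actions until someone approves."""
        if name not in GATED_ACTIONS:
            return run()
        self.pending.append(PendingAction(name, run))
        return None

    def approve(self, index: int, approver: str) -> Any:
        action = self.pending.pop(index)
        action.approved_by = approver
        self.done.append(action)
        return action.run()

    def reject(self, index: int) -> PendingAction:
        # Rejected actions are dropped; their side effect never ran.
        return self.pending.pop(index)


gate = ApprovalGate()
gate.submit("draft_reply", lambda: "draft saved")       # runs immediately
gate.submit("send_to_customer", lambda: print("sent"))  # waits in gate.pending
gate.approve(0, approver="owner")                       # prints "sent"
```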

Log the Evidence, Not Just the Output

A kill-switch workflow should leave a trail.

The log does not need to be complicated. It should answer four questions:

What did the assistant inspect?

What did it produce or change?

What risks, exceptions, or missing inputs did it notice?

Who approved, rejected, paused, or overrode the next step?

This helps the team improve the workflow over time. If the assistant keeps escalating the same type of issue, maybe the rule is too conservative. If people keep correcting the same draft, maybe the prompt or source data is weak. If nobody can tell why a decision happened, the workflow needs better evidence.

The log is also what turns a one-time AI experiment into an operating habit. People do not have to remember what happened. The workflow records enough context to review and improve it.
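A log that answers those four questions can be one JSON line per run. The sketch below is a hypothetical Python format, not a standard; the field names map directly to the four questions. The small counting helper shows how the log feeds review: a flag that fires on nearly every run may point to a rule that is too conservative or an input that is routinely missing.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Dict, List

DECISIONS = {"approved", "rejected", "paused", "overridden"}


@dataclass
class RunLogEntry:
    inspected: List[str]   # What did the assistant inspect?
    produced: List[str]    # What did it produce or change?
    flags: List[str]       # What risks, exceptions, or missing inputs did it notice?
    decision: str          # approved, rejected, paused, or overridden
    decided_by: str        # who made that call
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def __post_init__(self):
        if self.decision not in DECISIONS:
            raise ValueError(f"unknown decision: {self.decision!r}")


def append_log(path: str, entry: RunLogEntry) -> None:
    """Append one run as a single JSON line."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(entry)) + "\n")


def flag_counts(path: str) -> Dict[str, int]:
    """Count how often each flag appears across all logged runs."""
    counts: Dict[str, int] = {}
    with open(path, encoding="utf-8") as f:
        for line in f:
            for flag in json.loads(line)["flags"]:
                counts[flag] = counts.get(flag, 0) + 1
    return counts
```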

Plan the Undo Path

Many teams define what the assistant should do and forget to define how a person can pause or undo it.

That creates unnecessary risk.

Before launch, answer these questions:

How can a human pause this workflow?

Where does the workflow record its current state?

If the assistant creates the wrong draft, can we discard it without touching live records?

If it changes a record, can we see the previous value?

If it schedules something, can we cancel it quickly?

If it sends a notification, who sees the mistake and how is it corrected?

The first version of an AI workflow should prefer reversible steps. Draft before send. Queue before publish. Tag before reassign. Suggest before update. Save a copy before overwriting. Use test data before live data.

Reversibility is one of the most practical forms of AI safety a small business can apply.
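Here is a minimal Python sketch of "save a copy before overwriting" combined with a pause switch. It uses an in-memory dictionary as a hypothetical stand-in for a real system of record; an actual CRM or calendar would need its own history or API, so this only shows the shape of an undo path.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Tuple

_MISSING = object()  # marks "this record did not exist before the change"


@dataclass
class ReversibleStore:
    """Hypothetical in-memory stand-in for a system of record."""
    records: Dict[str, Any] = field(default_factory=dict)
    history: List[Tuple[str, Any]] = field(default_factory=list)  # newest last
    paused: bool = False  # the human pause switch

    def update(self, key: str, value: Any) -> None:
        if self.paused:
            raise RuntimeError("workflow is paused; no change made")
        # Save the previous value before overwriting it.
        self.history.append((key, self.records.get(key, _MISSING)))
        self.records[key] = value

    def previous_value(self, key: str) -> Any:
        """Value this key held before its most recent change (None if it was new)."""
        for k, old in reversed(self.history):
            if k == key:
                return None if old is _MISSING else old
        return None

    def undo_last(self) -> None:
        key, old = self.history.pop()
        if old is _MISSING:
            del self.records[key]  # the change created the record; remove it
        else:
            self.records[key] = old
```

Keeping the pause check inside `update` means a person can stop writes without touching the assistant's prompt or code.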

A Simple Kill-Switch Checklist

Before launching the workflow, write down the checklist in plain language.

A practical version might look like this:

The assistant may inspect: approved inbox labels, customer notes, recent call transcripts, and the scheduling calendar.

The assistant may create: draft replies, task suggestions, summary notes, and proposed appointment times.

The assistant may not: send emails, change invoices, delete records, update deal stages, publish content, or contact customers directly.

The assistant must ask for approval before: saving customer-facing notes, confirming an appointment, marking a task complete, or sending a message.

The assistant must stop and escalate when: information conflicts, customer intent is unclear, sensitive data appears, money is involved, the requested action is outside the approved workflow, or the output would affect a customer without review.

The workflow must log: sources checked, output created, stop condition triggered, approval requested, final human decision, and any correction made afterward.

The human pause control is: stop the scheduled run, revoke the task-specific access, or move the workflow back to draft-only mode.

This is not enterprise governance. It is the minimum viable operating model for AI that touches real work.
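The checklist can also be written as data the workflow consults before every action. The Python sketch below is a hypothetical encoding of an abbreviated version of the checklist above, and the action names are illustrative. Anything not listed is treated as outside the approved workflow, and the pause control is a mode switch that either drops the workflow back to draft-only or stops it entirely.

```python
from dataclasses import dataclass, replace
from typing import FrozenSet

MODES = {"live", "draft_only", "stopped"}


@dataclass(frozen=True)
class KillSwitchPolicy:
    may_inspect: FrozenSet[str]
    may_create: FrozenSet[str]
    needs_approval: FrozenSet[str]
    forbidden: FrozenSet[str]
    mode: str = "live"  # the human pause control

    def __post_init__(self):
        if self.mode not in MODES:
            raise ValueError(f"unknown mode: {self.mode!r}")

    def check(self, action: str) -> str:
        """Return 'allow', 'ask', or 'stop' for a requested action."""
        if self.mode == "stopped" or action in self.forbidden:
            return "stop"
        if action in self.needs_approval:
            # Draft-only mode blocks anything beyond inspecting and drafting.
            return "stop" if self.mode == "draft_only" else "ask"
        if action in self.may_inspect or action in self.may_create:
            return "allow"
        return "stop"  # outside the approved workflow: stop and escalate


def pause(policy: KillSwitchPolicy, mode: str = "draft_only") -> KillSwitchPolicy:
    """Return a copy of the policy switched to a more restricted mode."""
    return replace(policy, mode=mode)


# Hypothetical action names for an abbreviated version of the checklist.
POLICY = KillSwitchPolicy(
    may_inspect=frozenset({"read_inbox_labels", "read_customer_notes",
                           "read_call_transcripts", "read_calendar"}),
    may_create=frozenset({"draft_reply", "suggest_task", "write_summary_note",
                          "propose_appointment_time"}),
    needs_approval=frozenset({"save_customer_facing_note", "confirm_appointment",
                              "mark_task_complete"}),
    forbidden=frozenset({"send_email", "change_invoice", "delete_record",
                         "update_deal_stage", "publish_content", "contact_customer"}),
)
```

Making the policy immutable means a pause produces a new, more restricted copy rather than quietly editing the rules in place.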

How This Becomes a Skill or Automation

Once the checklist works manually, it can become reusable.

For a Codex workflow, the kill-switch rules can live inside a skill. OpenAI describes Codex skills as packages of task-specific instructions, resources, and optional scripts that help Codex follow a workflow reliably: https://developers.openai.com/codex/skills

That means the approval rules, input boundaries, output format, escalation language, and logging requirements do not have to be pasted into every prompt. They can become part of the reusable workflow.

If the workflow becomes predictable, it may later become an automation. OpenAI's Codex automation docs describe recurring tasks that can run in the background, report findings to the inbox, and combine with skills for more complex work: https://developers.openai.com/codex/app/automations

That progression should be earned:

First, run the workflow manually with a human watching.

Second, turn the working method into a skill so the rules are consistent.

Third, automate only the parts that have clear inputs, repeatable checks, and safe stop conditions.

Fourth, review the logs and improve the workflow before giving it more reach.

The mistake is skipping straight to automation because the demo worked once.

What to Review Before Launch

Before you let an AI workflow touch live work, review it like an operator, not like a tool buyer.

Can a normal team member explain what the workflow is allowed to do?

Can they explain when it must stop?

Can they see what it inspected?

Can they review the output before it affects a customer?

Can they pause it without calling a developer?

Can they recover from a bad run?

If the answer to any of these is no, the workflow is not ready for more autonomy. It may still be useful as a draft helper, analysis assistant, QA checker, or internal summarizer. That is fine. Useful and controlled is better than impressive and fragile.

The Practical Next Step

Choose one workflow your business already repeats. Do not start by asking how much AI can take over. Start by mapping what the workflow reads, what it writes, where a mistake would matter, and when a person should step in.

Then write the kill switch before you write the automation.

That one discipline makes the first AI workflow easier to trust, easier to improve, and easier to turn into a durable operating model later.