Your Team Is Already Using AI. Give Them Guardrails Before It Spreads.

Most small businesses do not adopt AI all at once.

It starts quietly. One person uses a chatbot to draft emails. Someone else summarizes customer notes. A manager tests an AI meeting tool. A team member uploads a spreadsheet to get help finding a pattern. Before long, the business is already using AI, even if no one has made an official decision.

That is not automatically a problem. Informal experimentation is often how useful workflows are discovered. The problem is when usage spreads faster than the business can answer basic questions: which tools are allowed, what data is off limits, who reviews customer-facing output, how mistakes get reported, and whether anyone knows what is actually being used.

A March 2026 Pax8 Pulse survey of U.S. small and midsize business leaders found that 62% were already using AI, while the company warned that adoption was moving faster than governance, integration strategy, and internal alignment. That pattern is not limited to large enterprises. It is exactly what happens when practical people find practical tools before the business has a practical operating model.

AI governance sounds like something only a large company needs. For a small business, the better word may be guardrails.

The point is not to create a policy binder. The point is to help the team keep moving without guessing where the edge is.

Start with the work, not the policy

A useful AI governance checklist starts by asking where AI is already touching real work.

Look at browser histories, tool subscriptions, shared prompts, meeting tools, support drafts, spreadsheets, customer communications, invoices, and project notes. Ask the team directly where AI is helping and where it feels risky. The first pass should be descriptive, not punitive. If people think the exercise is about catching them, they will hide the very information the business needs.

Once the current usage is visible, sort it into a few simple categories:

Safe to use with normal judgment. This might include brainstorming internal ideas, rewriting generic marketing copy, summarizing public information, or drafting a first version of a non-sensitive internal note.

Allowed with review. This might include customer emails, proposals, sales follow-ups, hiring materials, policy language, financial explanations, or any output that represents the business externally.

Restricted or prohibited. This should include sensitive customer data, regulated information, passwords, API keys, private contracts, medical or legal details, personnel issues, and anything the business would not want copied into a third-party tool without approval.

Needs a separate workflow. Some uses are not wrong, but they deserve structure before they become routine. Examples include customer support replies, appointment scheduling, CRM updates, invoice handling, hiring screens, or analysis that affects pricing and service decisions.

That is already governance. It is not complicated. It is a shared map of what is happening, what is acceptable, and where human judgment is still required.
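The four categories above can be kept as a small lookup that anyone on the team can read. The sketch below is only an illustration: the use-case names are hypothetical examples drawn from the lists above, and sending unknown uses to "allowed with review" is an assumption, not a rule from any framework.

```python
from enum import Enum


class UsageCategory(Enum):
    SAFE = "safe to use with normal judgment"
    REVIEW = "allowed with review"
    RESTRICTED = "restricted or prohibited"
    SEPARATE_WORKFLOW = "needs a separate workflow"


# Hypothetical inventory; replace with what your own team reports.
# Keys are lowercase so lookups can be case-insensitive.
USAGE_MAP = {
    "brainstorming internal ideas": UsageCategory.SAFE,
    "summarizing public information": UsageCategory.SAFE,
    "customer emails": UsageCategory.REVIEW,
    "proposals": UsageCategory.REVIEW,
    "entering passwords or api keys": UsageCategory.RESTRICTED,
    "invoice handling": UsageCategory.SEPARATE_WORKFLOW,
}


def categorize(use_case: str) -> UsageCategory:
    """Return the category for a use case.

    Assumption: anything not yet on the map is treated as
    'allowed with review' rather than silently safe.
    """
    return USAGE_MAP.get(use_case.strip().lower(), UsageCategory.REVIEW)
```

A spreadsheet with the same two columns would serve just as well. The point is that the map exists in one place and has a default for uses nobody has classified yet.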

The minimum viable checklist

A lightweight AI governance checklist for a small business should answer seven questions.

1. Which tools are approved?

Name the tools people can use today. Include who owns each tool, what it costs, what login or account should be used, and whether business data may be entered. This prevents the business from ending up with ten disconnected accounts and no visibility into where information went.
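As a rough sketch, the approved-tool list can be a handful of records with the fields named above. The tool names, owners, and prices here are made up for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ApprovedTool:
    name: str
    owner: str                   # person accountable for the tool
    monthly_cost_usd: float
    account: str                 # which login people should use
    business_data_allowed: bool  # may business data be entered?


# Hypothetical entries; none of these are real products.
REGISTRY = [
    ApprovedTool("ExampleChat", "Office manager", 25.0, "shared team workspace", False),
    ApprovedTool("ExampleNotes", "Operations lead", 12.0, "company single sign-on", True),
]


def find_tool(name: str) -> Optional[ApprovedTool]:
    """Look up a tool by name, ignoring case."""
    for tool in REGISTRY:
        if tool.name.lower() == name.strip().lower():
            return tool
    return None


def may_enter_business_data(name: str) -> bool:
    """Unlisted tools are treated as not approved for business data."""
    tool = find_tool(name)
    return tool is not None and tool.business_data_allowed
```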

2. What data is off limits?

Write this plainly. Do not upload passwords, private customer records, payment details, confidential contracts, medical information, legal matter details, personnel records, or unreleased financials unless the business has explicitly approved the tool and workflow. If there are industry-specific obligations, name them.
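A written rule can be backed by a simple reminder before text is pasted into a tool. The sketch below is an assumption about how that might look. It catches only a few obvious patterns, it will miss most sensitive content, and it is no substitute for the written rule or for judgment. The pattern names and regular expressions are illustrative.

```python
import re

# Illustrative patterns only; real sensitive data rarely looks this tidy.
PATTERNS = {
    "possible card number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
    "possible password": re.compile(r"(?i)\bpassword\s*[:=]\s*\S+"),
    "possible secret key": re.compile(r"(?i)\b(?:api[_-]?key|secret|token)\s*[:=]\s*\S+"),
}


def flag_sensitive(text: str) -> list[str]:
    """Return the labels of any patterns found in the text."""
    return [label for label, pattern in PATTERNS.items() if pattern.search(text)]
```

An empty result means only that nothing obvious was spotted, not that the text is safe to share.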

3. Which outputs require review?

Any AI-generated work that reaches a customer, changes a record, spends money, affects a worker, gives professional advice, or represents the business should have a human approval step. The approval does not need to be dramatic. It can be as simple as having a named person review the draft before it is sent.

4. What needs to be logged?

You do not need a complex audit system to start. For higher-risk workflows, keep a short record of the tool used, the input source, the draft output, who reviewed it, what changed, and when it was approved. This is especially useful when the business later asks, "Why did we make that decision?"
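One possible shape for that record is a single line of JSON per approval, appended to a shared file. The field names and the sample entry below are hypothetical, and a spreadsheet row with the same columns would work equally well.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class ReviewLogEntry:
    tool: str
    input_source: str
    draft_output: str
    reviewed_by: str
    what_changed: str
    approved_at: str  # ISO 8601 timestamp


def to_log_line(entry: ReviewLogEntry) -> str:
    """Serialize one entry as a single JSON line."""
    return json.dumps(asdict(entry), sort_keys=True)


# Made-up example entry for illustration.
entry = ReviewLogEntry(
    tool="ExampleChat",
    input_source="support ticket notes (customer name removed)",
    draft_output="reply draft v1",
    reviewed_by="Support lead",
    what_changed="softened refund wording",
    approved_at=datetime(2026, 1, 15, 14, 30, tzinfo=timezone.utc).isoformat(),
)
log_line = to_log_line(entry)
```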

5. How do people report problems?

Make it easy for anyone to say that an output was wrong, that a tool exposed the wrong data, that an answer sounded confident but was not verified, or that a workflow created more cleanup than it saved. If mistakes disappear into embarrassment, the business never improves.

6. What training is required?

Training should start with the actual work people do. A receptionist, bookkeeper, salesperson, project manager, and owner do not need the same AI lesson. Each person needs to know which tasks are approved, which data is sensitive, what review looks like, and when to stop.

7. When will the checklist be reviewed?

AI tools and team habits change quickly. A checklist written once and ignored is not governance. Put a recurring review on the calendar: monthly at first, then quarterly once the business has a rhythm. Review new tools, mistakes, wins, open questions, and workflows that should be tightened.
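The cadence can be worked out ahead of time so the dates go straight onto the calendar. This sketch assumes three monthly reviews followed by two quarterly ones. Those counts are an illustration, since when a business "has a rhythm" is a judgment call.

```python
import calendar
from datetime import date


def add_months(d: date, months: int) -> date:
    """Move a date forward by whole months, clamping to the month's last day."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def review_schedule(start: date, monthly_reviews: int = 3,
                    quarterly_reviews: int = 2) -> list[date]:
    """Return review dates: monthly at first, then quarterly."""
    dates = []
    current = start
    for _ in range(monthly_reviews):
        current = add_months(current, 1)
        dates.append(current)
    for _ in range(quarterly_reviews):
        current = add_months(current, 3)
        dates.append(current)
    return dates
```

Starting from 15 January 2026, `review_schedule(date(2026, 1, 15))` gives reviews on 15 February, 15 March, and 15 April, then 15 July and 15 October.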

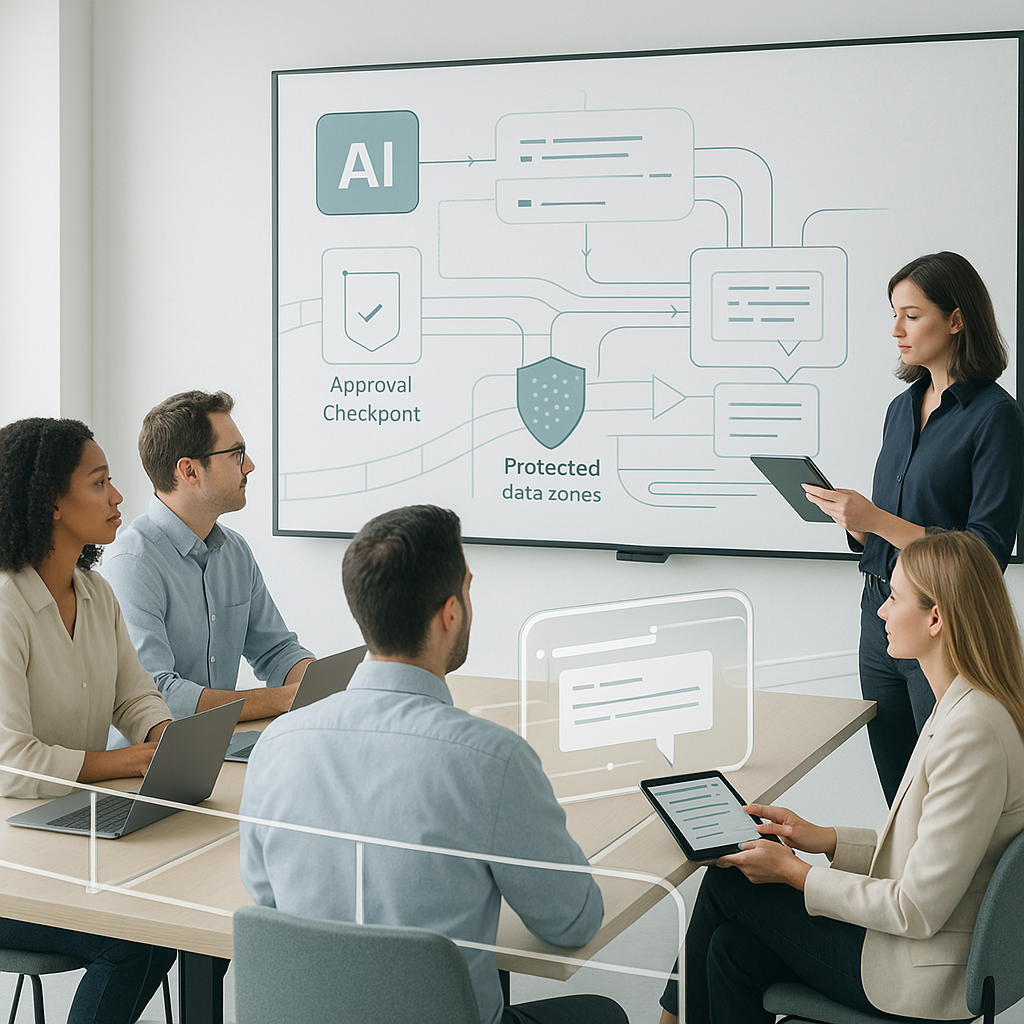

Where human approval belongs

The easiest mistake is to make every AI use feel equally risky. That slows down useful work and still misses the places where risk actually lives.

Human approval should be strongest when AI touches four areas: external communication, sensitive data, business records, and irreversible action.

External communication includes replies to customers, marketing claims, proposals, hiring messages, and anything posted publicly. Sensitive data includes customer details, employee records, financial information, legal materials, and private account access. Business records include CRM entries, invoices, reports, forecasts, policy documents, and project status. Irreversible action includes sending, deleting, publishing, purchasing, signing, refunding, scheduling, or changing permissions.

A practical rule is this: AI can help prepare the work, but a person owns the decision when the result affects a customer, a dollar, a record, a reputation, or a right.

That keeps the policy easy to remember. It also keeps AI positioned correctly. The tool may be useful, but accountability still belongs to the business.
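The four areas can be written as a short check that says why a task needs a person, not only whether it does. This is a sketch under stated assumptions: the `AiTask` fields are hypothetical flags that a person fills in when describing the task, and nothing here detects risk automatically.

```python
from dataclasses import dataclass


@dataclass
class AiTask:
    description: str
    external_communication: bool = False
    sensitive_data: bool = False
    business_record: bool = False
    irreversible_action: bool = False


def approval_reasons(task: AiTask) -> list[str]:
    """List which of the four areas the task touches."""
    checks = {
        "external communication": task.external_communication,
        "sensitive data": task.sensitive_data,
        "business records": task.business_record,
        "irreversible action": task.irreversible_action,
    }
    return [area for area, touched in checks.items() if touched]


def needs_human_approval(task: AiTask) -> bool:
    """A person owns the decision if any of the four areas is touched."""
    return bool(approval_reasons(task))
```

Returning the reasons matters more than the yes or no. A reviewer who sees "external communication, irreversible action" knows what to look for in the draft.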

What a real workflow can look like

Imagine a small professional services firm that wants to bring order to scattered AI use.

The starting inputs are simple: a list of current AI subscriptions, a few recent examples of AI-assisted work, team notes about where AI is being used, customer-facing workflows, existing privacy or security policies, and any incidents or near misses.

An AI assistant can review those materials and produce a first-pass governance checklist. It can identify approved tools, likely risky use cases, missing review steps, duplicate subscriptions, training needs, and three policies the business should write first.

The output should not be treated as final policy. It should become a review artifact.

A human owner checks whether the tool list is accurate. A manager confirms whether the workflow examples match reality. Someone responsible for privacy, finance, legal, or operations reviews the sensitive-data rules. The team then decides which guardrails are effective immediately and which require more work.

The final artifact might include:

Approved AI tools and owners.

Prohibited data categories.

Review gates for customer-facing work.

A short issue-reporting path.

A lightweight usage log for higher-risk workflows.

A 30-day training plan by role.

The first three policy drafts to create.

That is enough to reduce confusion without pretending the business has solved every AI risk.

How this can become a repeatable skill or automation

After the checklist has been through one full review, its repeatable parts can be turned into a more durable workflow.

For example, a business could create a reusable Codex skill that tells the assistant where to look, which files to inspect, which policies matter, how to format the checklist, and which items must always be escalated for human review. OpenAI's Codex skills documentation describes skills as reusable packages of instructions, resources, and optional scripts that help Codex follow task-specific workflows reliably: https://developers.openai.com/codex/skills

A later automation could run on a schedule to check for new tool subscriptions, recent AI usage notes, policy changes, unresolved incidents, or workflows that have moved from experiment to routine. OpenAI's Codex automation docs describe recurring background tasks that can report findings to the inbox and combine with skills for more complex work: https://developers.openai.com/codex/app/automations

The automation should not silently approve new tools or rewrite policy. It should surface changes, evidence, and recommended decisions. The owner still decides what is allowed.
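The "surface, do not decide" idea can be shown in plain Python. The sketch below does not use the Codex automations interface or any real product API. The function name and the tool names are hypothetical. It compares the approved list with what was observed in use and returns a report for the owner without changing either list.

```python
def surface_tool_changes(approved: list[str], observed: list[str]) -> dict[str, list[str]]:
    """Report differences between approved and observed tools.

    The inputs are never modified; approving or removing a tool
    remains a human decision.
    """
    approved_set = {name.strip().lower() for name in approved}
    observed_set = {name.strip().lower() for name in observed}
    return {
        "unapproved_in_use": sorted(observed_set - approved_set),
        "approved_not_seen": sorted(approved_set - observed_set),
    }


# Hypothetical example: one new tool appeared, one approved tool went unused.
report = surface_tool_changes(
    approved=["ExampleChat", "ExampleNotes"],
    observed=["examplechat", "NewSummarizer"],
)
```

In this example the report lists `newsummarizer` as in use without approval and `examplenotes` as approved but not seen, which gives the owner two concrete questions for the next review.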

This is where small businesses can get a real advantage. They do not need an enterprise governance office to start. They need a short checklist, a visible owner, a review rhythm, and a way to turn lessons into better instructions over time.

A good first-week version

If AI usage is already spreading through your team, do not wait for the perfect policy.

This week, write down the tools people are allowed to use. List the data they should not enter. Pick the outputs that require review. Create one place to report mistakes. Review the checklist with the team. Then schedule the next review before the habits drift again.

NIST's AI Risk Management Framework is voluntary and use-case agnostic, and it frames AI risk management as something organizations can adapt to their own size, sector, and capacity. That is the right mental model for small businesses. You do not need to copy an enterprise program. You need to make the risk visible enough to manage.

AI adoption is already happening in many businesses. The choice is whether it spreads through guesswork or through a few clear rules that help people use it well.

Sources used:

Pax8 Pulse, March 2026 SMB AI adoption survey: https://www.pax8.com/en-us/news-post/new-pax8-research-reveals-small-businesses-are-adopting-ai-faster-than-theyre-building-strategies-to-manage-it/

NIST AI Risk Management Framework overview: https://www.nist.gov/itl/ai-risk-management-framework

NIST AI RMF 1.0 publication: https://www.nist.gov/publications/artificial-intelligence-risk-management-framework-ai-rmf-10

Grant Thornton AI Impact Survey, April 2026: https://www.grantthornton.com/insights/press-releases/2026/april/grant-thornton-survey-on-ai-proof-gap

OpenAI Codex skills documentation: https://developers.openai.com/codex/skills

OpenAI Codex automations documentation: https://developers.openai.com/codex/app/automations