Give AI Assistants Access by Task, Not by Trust

AI assistants are starting to move from chat windows into real work. They can run commands, inspect files, draft replies, update systems, and help teams move faster.

That makes access the core business problem.

A person can understand context, pause when something feels risky, and ask for permission before crossing a line. An AI assistant needs those boundaries designed around it. If the only way to make the assistant useful is to hand it a full .env file, a shared password manager, or a broad admin session, the workflow is not ready yet.

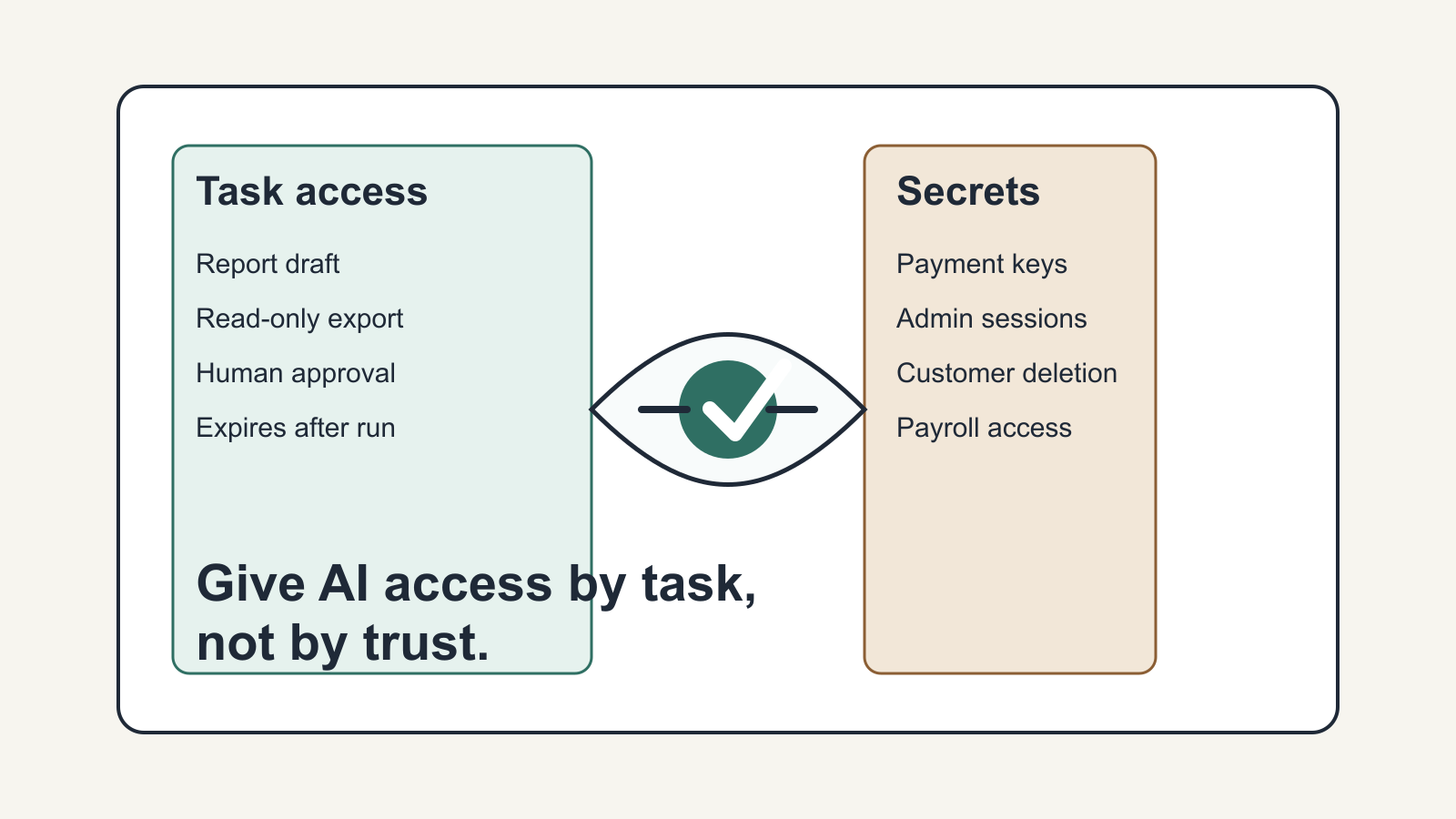

The better question is not, "Do we trust the AI?"

The better question is, "What exact access does this task require, for how long, and what should remain impossible?"

Start With the Job, Not the Tool

A small business does not need a perfect security architecture before using AI. It does need a clear picture of the job.

For example, an assistant helping with a weekly report may need read-only access to a spreadsheet export, a reporting template, and a folder where it can save a draft. It probably does not need payroll access, Stripe refund permissions, customer deletion rights, or every production secret.

An assistant helping with customer follow-up may need recent support messages and a place to draft replies. It does not need the ability to send final emails without review unless that has been intentionally approved.

An assistant helping with a website update may need repository access, a test command, and a deployment checklist. It does not automatically need live payment keys.

This sounds basic, but it is where many AI workflows become risky. Teams jump from "the assistant can help" to "the assistant can reach everything" because broad access is easier to set up than narrow access.

Convenience is not the same as a usable operating model.

Use Task-Scoped Access

Task-scoped access means the assistant receives only the inputs and permissions needed for the current job.

That can be as simple as giving it a copied export instead of a live database connection. It can mean using a test account instead of an owner account. It can mean creating a separate API key with limited permissions. It can mean requiring human approval before any action that sends money, changes customer data, publishes content, or updates production systems.

The pattern is practical:

1. Define the task.

2. List the systems involved.

3. Separate read access from write access.

4. Decide what the assistant may do directly.

5. Decide what must come back to a person for approval.

6. Remove or expire access when the task is done.

This is not only a security habit. It also makes the workflow easier to troubleshoot. When an assistant has a narrow job and narrow permissions, mistakes are easier to see, reproduce, and fix.
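For readers who want to see the pattern in concrete form, here is a minimal Python sketch of the steps above. It is an illustration under stated assumptions, not a reference to any particular product: the `TaskScope` class, the `decide` method, and system names such as `crm_export` and `client_email` are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class TaskScope:
    """Hypothetical record of what one assistant task may touch."""
    task: str
    read: frozenset               # systems the assistant may read
    write: frozenset              # systems the assistant may change directly
    approval_required: frozenset  # systems where a person must approve changes
    expires_at: datetime          # access ends here even if nobody revokes it

    def decide(self, action: str, system: str, now: Optional[datetime] = None) -> str:
        """Return 'allow', 'ask_human', or 'deny' for a requested action."""
        now = now or datetime.now(timezone.utc)
        if now >= self.expires_at:
            return "deny"  # the task window has closed
        if action == "read":
            return "allow" if system in self.read else "deny"
        if action == "write":
            if system in self.write:
                return "allow"
            if system in self.approval_required:
                return "ask_human"
        return "deny"  # anything not listed stays impossible


# Example scope for the weekly report task (all names are illustrative).
start = datetime(2025, 1, 6, 9, 0, tzinfo=timezone.utc)
weekly_report = TaskScope(
    task="weekly report draft",
    read=frozenset({"crm_export", "report_template"}),
    write=frozenset({"drafts_folder"}),
    approval_required=frozenset({"client_email"}),
    expires_at=start + timedelta(hours=2),
)

print(weekly_report.decide("read", "crm_export", start))     # allow
print(weekly_report.decide("write", "client_email", start))  # ask_human
print(weekly_report.decide("write", "payroll", start))       # deny
```

The design choice worth noticing is the last line of `decide`: anything that is not explicitly listed is denied, so forgetting to list a system fails safe.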

Secrets Need a Different Standard

Passwords, API keys, signing keys, database credentials, payment credentials, and customer-data tokens should not be treated like ordinary context.

They are not just information. They are authority.

A helpful assistant may need to run a command that depends on a secret, but that does not mean it needs to see the secret itself. Newer developer tooling, such as the local encrypted vault pattern cited in the source notes, is starting to explore this distinction: keep secrets encrypted or locally controlled, inject only approved values into the process that needs them, and limit access by session, policy, or command.
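To make "use the secret without seeing it" concrete, here is a rough Python sketch. It assumes a trusted wrapper that already holds the secret (how it is stored and unlocked is out of scope here) and an allowlist of approved commands. The function `run_with_secret`, the variable `REPORT_API_KEY`, and the placeholder value are hypothetical, and this is not how any specific vault tool works internally.

```python
import os
import subprocess
import sys


def run_with_secret(command, secret_name, secret_value, approved_commands):
    """Run one approved command with a secret placed in its environment only.

    The caller (the assistant side) receives the exit code and redacted
    output, never the secret value itself.
    """
    if tuple(command) not in approved_commands:
        raise PermissionError("command is not on the approved list")
    if not secret_value:
        raise ValueError("secret value must not be empty")

    env = dict(os.environ)
    env[secret_name] = secret_value  # passed to the child process only

    result = subprocess.run(
        command, env=env, capture_output=True, text=True, timeout=60
    )

    def redact(text):
        # Naive redaction: catches the literal value, not encoded copies of it.
        return text.replace(secret_value, "[REDACTED]")

    return {
        "exit_code": result.returncode,
        "stdout": redact(result.stdout),
        "stderr": redact(result.stderr),
    }


if __name__ == "__main__":
    # Stand-in for a real reporting command that reads the key from its environment.
    cmd = [sys.executable, "-c", "import os; print(len(os.environ['REPORT_API_KEY']))"]
    outcome = run_with_secret(cmd, "REPORT_API_KEY", "not-a-real-key", {tuple(cmd)})
    print(outcome["exit_code"], outcome["stdout"].strip())
```

The assistant can ask for the command to be run and read the result, but the credential never appears in its context. A production setup would add the session limits and audit logging that dedicated tools provide.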

The important lesson for a business owner is not that one specific vault tool is mandatory. The lesson is simpler: if an AI workflow needs sensitive credentials, design the credential path as part of the workflow. Do not paste the secret into a chat. Do not leave production keys in a random working folder. Do not give every assistant the same broad access because it worked once during a demo.

A useful AI setup should make the safe path easier to repeat.

What This Looks Like in a Real Business

Imagine a business wants an AI assistant to prepare a weekly sales summary.

A loose setup might give the assistant access to the CRM, accounting system, email inbox, and analytics dashboard under an owner login. It works in the demo, but it creates a fragile and risky dependency. If the assistant misunderstands the task, follows stale instructions, or exposes context in the wrong place, the blast radius is large.

A better setup starts smaller.

The CRM exports the needed fields to a reporting folder. The assistant reads that export, checks it against a simple rule list, drafts the summary, and flags missing or suspicious values. If a live CRM query is eventually needed, it uses a dedicated read-only credential. If the report should be sent to clients, the assistant drafts it and a person approves the send.
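The "reads that export, checks it against a simple rule list" step might look something like the Python sketch below. The column names (`account`, `owner`, `amount`) and the threshold are assumptions chosen for illustration; a real export would have its own fields and rules.

```python
import csv
import io

REQUIRED_FIELDS = ("account", "owner", "amount")  # hypothetical column names
MAX_PLAUSIBLE_AMOUNT = 100_000  # hypothetical cutoff for a "suspicious" value


def check_export(csv_text):
    """Return human-readable flags for missing or suspicious values."""
    flags = []
    reader = csv.DictReader(io.StringIO(csv_text))
    for row in reader:
        line_no = reader.line_num  # line in the export, counting the header
        for name in REQUIRED_FIELDS:
            if not (row.get(name) or "").strip():
                flags.append(f"line {line_no}: missing {name}")
        raw = (row.get("amount") or "").strip()
        if not raw:
            continue  # already flagged as missing above
        try:
            amount = float(raw)
        except ValueError:
            flags.append(f"line {line_no}: amount is not a number: {raw!r}")
            continue
        if amount < 0 or amount > MAX_PLAUSIBLE_AMOUNT:
            flags.append(f"line {line_no}: suspicious amount {amount}")
    return flags


sample = (
    "account,owner,amount\n"
    "Acme,Dana,1200\n"
    "Beta,,300\n"
    "Gamma,Lee,-50\n"
)
for flag in check_export(sample):
    print(flag)
# line 3: missing owner
# line 4: suspicious amount -50.0
```

Because the function only receives the text of a copied export, it has no path to the live CRM. The flags go to a person, who decides what to fix before the summary is sent.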

The result may feel less magical, but it is much easier to trust.

You can inspect the inputs. You can explain the permission boundary. You can repeat the process next week. You can hand it to another team member without saying, "Just be careful."

The Buying Lens

This is also a useful way to evaluate vendors, consultants, and internal AI projects.

When someone proposes an AI assistant, ask what access model comes with it.

What systems does it need?

Which permissions are read-only?

Which actions require approval?

Where are secrets stored?

Can access be revoked cleanly?

Is there a log of what happened?

Can the workflow run with test data first?

What happens if the assistant produces the wrong instruction or takes the wrong action?

Good answers do not need to be complicated. They need to be specific.

A vendor who can explain access boundaries, approval gates, and rollback paths is usually thinking about implementation. A vendor who only says the assistant is "secure" or "private" without showing the workflow is asking you to trust a label.

Trust the workflow more than the label.

A Simple Starting Rule

Before giving an AI assistant access to a system, write one sentence:

"This assistant may use this access to do this task, but it may not do these things."

If that sentence is hard to write, the workflow is not scoped tightly enough yet.

AI assistants will keep getting more capable. That does not remove the need for access design. It makes access design more important.

For small businesses, the goal is not to block useful automation. The goal is to make useful automation repeatable, reviewable, and limited enough that one mistake does not become a business-wide problem.

That is where AI starts to become operational support instead of another risky experiment.

Source notes

This article was developed from archived X bookmark material, including Dave Blumenfeld (@dblumenfeld) discussing a local encrypted vault pattern for AI agent secret access: https://x.com/dblumenfeld/status/2031757313481335103. The related Keypo signer repository describes encrypted macOS secret storage, scoped vault execution, session limits, and audit logging: https://github.com/keypo-us/keypo-cli/tree/main/keypo-signer.