When a Repeatable Workflow Should Become a Skill and an Automation

Most businesses do not need more one-off AI experiments. They need the useful ones to become repeatable.

That is the step many teams miss. Someone uses Codex to clean up a report, inspect a folder of client notes, triage an inbox, test a checkout flow, or draft a follow-up plan. The result is useful. Everyone agrees it saved time. Then the workflow disappears back into memory, because the team never turns the successful run into a process.

The practical question is not simply, "Can AI do this task?" A better question is, "If this worked once, what would it take to make it reliable enough to use again next week?"

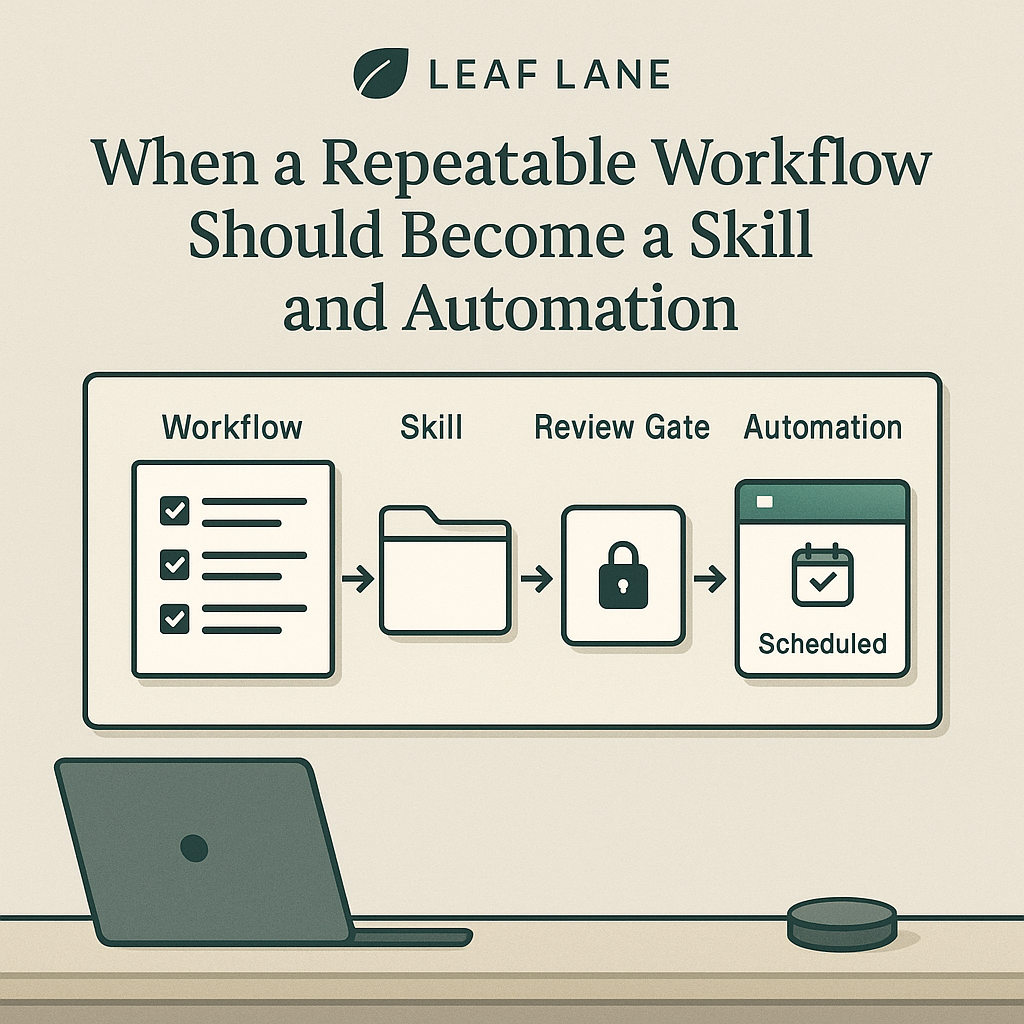

That is where the progression from prompt to workflow to skill to automation matters.

Start With the Completed Workflow, Not a Blank Prompt

A reusable skill should not start from a clever instruction. It should start from a real piece of work that already happened.

For example, imagine a professional services firm runs a weekly client health check. The first version might be a normal Codex session: inspect the client tracker spreadsheet, review recent client emails, find accounts with no touch in 14 days, identify overdue deliverables, and draft follow-up tasks for a manager to approve.
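To make the example concrete, the core of that check can be sketched in a few lines of Python. This is an illustrative sketch, not how Codex performs the task: the `Account` record, its field names, and the `find_exceptions` helper are all hypothetical, and "no touch in 14 days" is interpreted here as a last contact more than 14 days before the run date.

```python
from dataclasses import dataclass
from datetime import date, timedelta

NO_TOUCH_DAYS = 14  # threshold from the example; a real team would set its own


@dataclass
class Account:
    """Hypothetical row from a client tracker export."""
    name: str
    owner: str
    last_touch: date               # date of the most recent client contact
    deliverables_due: list[date]   # due dates of still-open deliverables


def find_exceptions(accounts: list[Account], today: date) -> list[dict]:
    """Return one entry per account that needs a manager's attention."""
    cutoff = today - timedelta(days=NO_TOUCH_DAYS)
    exceptions = []
    for acct in accounts:
        reasons = []
        # Flag accounts whose last contact is older than the cutoff.
        if acct.last_touch < cutoff:
            days = (today - acct.last_touch).days
            reasons.append(f"no touch in {days} days")
        # Flag open deliverables whose due date has already passed.
        overdue = [d for d in acct.deliverables_due if d < today]
        if overdue:
            reasons.append(f"{len(overdue)} overdue deliverable(s)")
        if reasons:
            exceptions.append(
                {"account": acct.name, "owner": acct.owner, "reasons": reasons}
            )
    return exceptions
```

Accounts with no findings are simply left out of the result, so the manager only sees the ones that need a decision.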

If the output is useful, the team should save more than the final answer. They should save the operating shape of the work:

What files, apps, or records were inspected?

What counted as a risk?

Which signals mattered most?

Which items were ignored because they were noisy?

What did the human reviewer approve, reject, or correct?

What format made the result easy to act on?

Those details are the difference between "ask AI to check clients" and a workflow someone can trust.

Turn the Workflow Into a Skill

OpenAI describes Codex skills as task-specific packages that can include instructions, resources, and optional scripts so Codex can follow a workflow reliably: https://developers.openai.com/codex/skills

For a business team, that means a skill is less like a magic prompt and more like a lightweight operating manual for an AI-assisted task.

OpenAI also lists Codex use cases that fit this same pattern, including analyzing datasets and reports, managing inbox work, querying tabular data, QA with Computer Use, and saving repeatable workflows as skills: https://developers.openai.com/codex/use-cases

A good skill for the client health check might include:

Trigger conditions: run every Monday morning or before a client success meeting.

Required sources: the client tracker, recent emails, open project notes, overdue task list, and any account-risk rules.

Step-by-step instructions: inspect the tracker first, then email, then deliverables, then summarize exceptions.

Definitions: what counts as no recent touch, overdue, blocked, waiting on client, or needs manager review.

Output format: a short list of accounts needing action, with owner, reason, evidence, and suggested next step.

Safety rules: do not send emails, change CRM records, or mark tasks complete without approval.

Review checklist: ask a person to approve any outbound message, client-status change, or escalation.

The skill does not remove judgment. It preserves the parts of the workflow that should not depend on someone remembering exactly how last week went.

Decide What Can Become an Automation

Automations are a separate decision. OpenAI's Codex app documentation says recurring tasks can run in the background, add findings to the inbox, and combine with skills for more complex work: https://developers.openai.com/codex/app/automations

That does not mean every useful skill should run on a schedule.

A workflow is a better automation candidate when the trigger is predictable, the source data is available, the output can be reviewed later, and the consequences of a false positive are manageable.
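Those four conditions can be written down as a simple checklist. The sketch below is only one way to express it; `WorkflowProfile` and `automation_blockers` are hypothetical names, and the yes/no answers still come from a person who knows the workflow.

```python
from dataclasses import dataclass


@dataclass
class WorkflowProfile:
    """Hypothetical yes/no answers about one workflow."""
    predictable_trigger: bool
    sources_available: bool
    reviewable_later: bool
    false_positives_manageable: bool


def automation_blockers(profile: WorkflowProfile) -> list[str]:
    """List the conditions that are not yet met; empty means a reasonable candidate."""
    checks = {
        "trigger is not predictable": profile.predictable_trigger,
        "source data is not reliably available": profile.sources_available,
        "output cannot wait for later review": profile.reviewable_later,
        "false positives are too costly": profile.false_positives_manageable,
    }
    return [label for label, ok in checks.items() if not ok]
```

An empty list suggests the workflow is worth trying on a schedule. Anything else names what to fix first, or why the skill should stay manually invoked.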

The weekly client health check is a good candidate because it can run in the background and report only exceptions. If three accounts need attention, a manager can review the evidence and decide what to do. If nothing needs attention, there is no reason to interrupt the team.

A workflow is a weaker automation candidate when it depends on live negotiation, sensitive judgment, incomplete context, or immediate external action. In those cases, a skill can still help. The team can invoke it when needed, keep the steps consistent, and leave the timing to a person.

Keep Human Approval Gates Explicit

The most important part of this system is not the schedule. It is the approval gate.

A skill can gather evidence, compare records, draft recommendations, and format the result. An automation can run that process on a recurring basis. But the team still needs to decide where human approval belongs.

Useful approval gates often include:

Before contacting a customer.

Before changing money, pricing, invoices, refunds, or payment status.

Before updating legal, HR, financial, or compliance records.

Before deleting, archiving, or overwriting source data.

Before escalating a relationship-sensitive issue.

Before publishing public content.

These gates should be written into the skill itself. If the automation runs later, it inherits the same boundary.

That is how a business gets the benefit of repeatability without pretending the software should own every decision.
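For teams that wrap their own tooling around a skill, an approval gate can also be enforced in code. The following is a minimal sketch under stated assumptions: the `Action` names, the `GATED` set, and the `perform` function are hypothetical and are not part of Codex. It shows the idea that gated actions fail loudly unless a named person has approved them.

```python
from enum import Enum
from typing import Optional


class Action(Enum):
    """Hypothetical actions a workflow might attempt."""
    DRAFT_REPORT = "draft_report"
    SEND_CUSTOMER_EMAIL = "send_customer_email"
    CHANGE_INVOICE = "change_invoice"
    DELETE_SOURCE_DATA = "delete_source_data"
    PUBLISH_CONTENT = "publish_content"


# Actions that must never run without a human sign-off.
GATED = frozenset({
    Action.SEND_CUSTOMER_EMAIL,
    Action.CHANGE_INVOICE,
    Action.DELETE_SOURCE_DATA,
    Action.PUBLISH_CONTENT,
})


class ApprovalRequired(Exception):
    """Raised when a gated action is attempted without an approver."""


def perform(action: Action, approved_by: Optional[str] = None) -> str:
    """Run an action, refusing gated ones that lack a named approver."""
    if action in GATED and not approved_by:
        raise ApprovalRequired(f"{action.value} needs human approval")
    suffix = f" (approved by {approved_by})" if approved_by else ""
    return f"{action.value}: done{suffix}"
```

Drafting stays frictionless, while anything that touches a customer, money, or source data stops until someone signs off.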

What the Inputs and Outputs Should Look Like

A mature reusable workflow has boring, specific inputs and outputs.

For the client health check, the inputs might be:

A spreadsheet export of active clients.

A folder of meeting notes.

Email threads from the last 14 days.

A list of open deliverables.

A short business-rules note that defines risk signals.

The outputs might be:

A manager-ready exception report.

A table of accounts with no recent touch.

A list of overdue deliverables with evidence.

Draft follow-up tasks, not automatically assigned tasks.

Draft email language, not automatically sent messages.

A short "nothing to report" note when no action is needed.

That last output matters. Good automations should not manufacture drama just because they ran. Sometimes the most useful result is a quiet confirmation that there is nothing new to review.
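As a rough illustration of that output shape, the sketch below renders either an exception report or the quiet confirmation. The `render_report` function and the dictionary keys (`account`, `owner`, `reason`, `evidence`, `next_step`) are hypothetical, chosen to mirror the fields mentioned earlier in this article.

```python
def render_report(exceptions: list[dict]) -> str:
    """Format findings for a manager, or confirm that nothing needs review."""
    if not exceptions:
        # A quiet run still leaves a short, explicit confirmation.
        return "Client health check: nothing to report."
    lines = [f"Client health check: {len(exceptions)} account(s) need review."]
    for item in exceptions:
        lines.append(
            f"- {item['account']} (owner: {item['owner']}): {item['reason']}. "
            f"Evidence: {item['evidence']}. "
            f"Draft next step: {item['next_step']}"
        )
    return "\n".join(lines)
```

The next step is labeled as a draft on purpose: the report proposes, and a person decides.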

Make the First Version Small

A common mistake is trying to turn an entire department into an automation. The better path is to package one narrow workflow.

Start with a task that already has a rhythm:

Weekly client health review.

Monthly reporting check.

New lead triage.

End-of-day handoff.

Content publishing checklist.

Invoice exception review.

Customer feedback routing.

Lightweight QA on critical site flows.

Run it manually with Codex a few times. Improve the instructions. Add the missing definitions. Notice where the human reviewer keeps correcting the output. Only then decide whether it deserves a skill, and only after that decide whether a scheduled automation makes sense.

A Practical Rule of Thumb

A workflow is ready to become a skill when three things are true.

First, the task has happened enough times that the steps are no longer mysterious.

Second, the useful output has a stable shape: a report, checklist, task list, draft, exception log, or decision brief.

Third, the risk boundaries can be stated clearly enough that Codex knows what it may inspect, what it may produce, and what it must leave for a person.

A workflow is ready to become an automation only when the timing is predictable and the output can be reviewed before anyone acts on it.

That is the practical progression: prove the workflow once, document the pattern, package it as a skill, then automate only the parts that are stable enough to run in the background.

Leaf Lane's view is simple: useful AI work should become clearer and more repeatable over time. A good first prompt can save an hour. A good workflow can save that hour again. A good skill can help the team run the workflow consistently. A good automation can make sure the right review appears at the right time, without asking people to remember another recurring task.