# Skills All the Way Down: How I Used Codex to Build a Repeatable Content System

Most writeups about AI workflows skip the part that matters most in practice.

They show the finished output, mention the model, and leave out the middle where the workflow gets built, breaks, gets refined, and slowly becomes dependable enough to use again.

That middle is the useful part.

This article is about one practical lesson: the biggest gains rarely come from a better one-time prompt. They come from turning repeated work into reusable instructions, then improving those instructions after real runs.

## The problem with one-off prompting

A lot of AI work starts as improvisation.

You try a prompt. It kind of works. You try again. It works differently. You paste more context. You manually clean up the result.

That can be fine for occasional tasks. It becomes a bottleneck when the task repeats.

If the process has to be rediscovered every time, the value does not compound.

## What a better workflow looks like

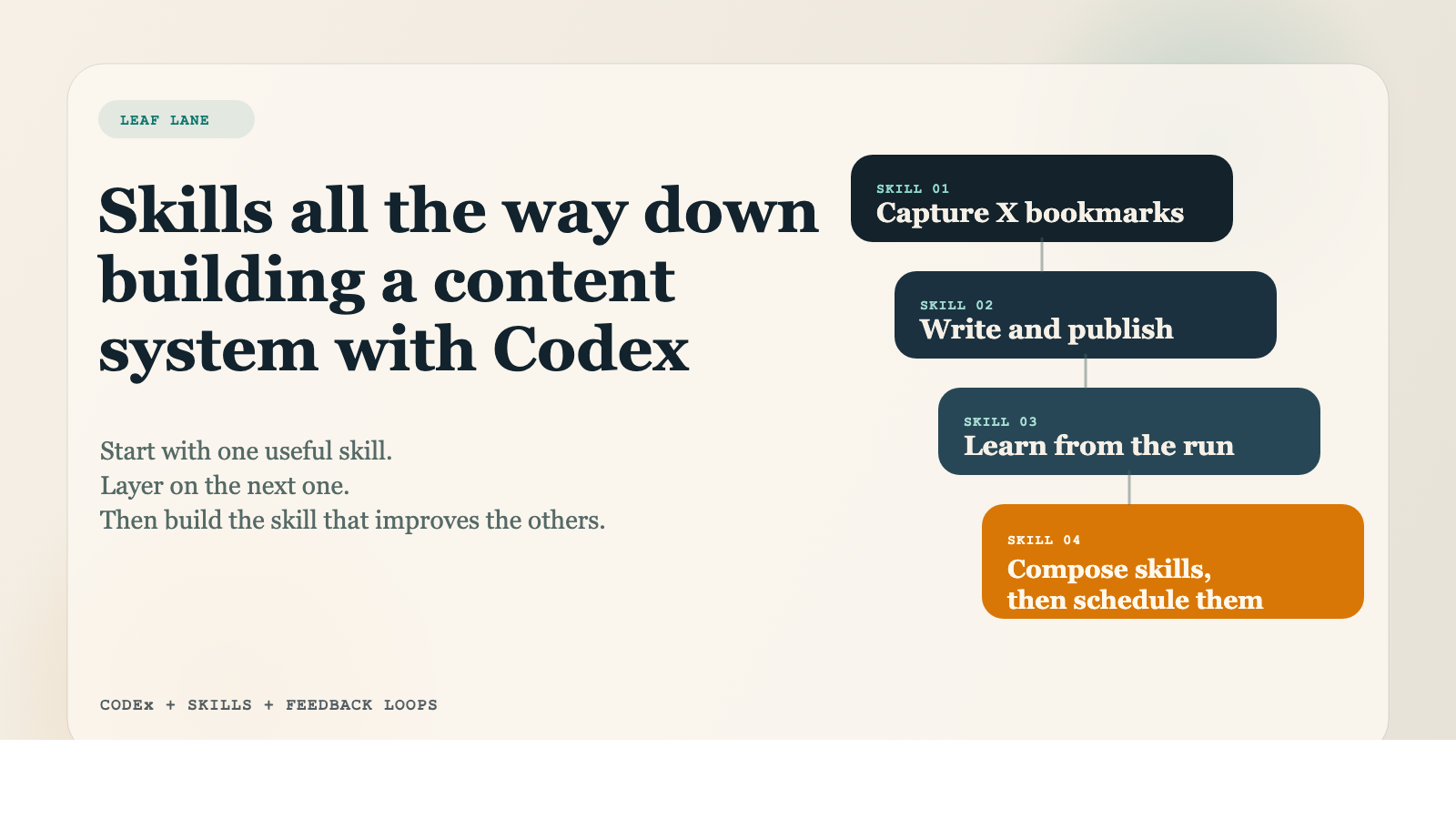

A stronger pattern is to break the job into reusable parts.

In our case, the first issue was not writing. It was source capture.

Bookmarks and saved links were acting as a holding area for ideas, but not as a real system. Useful material piled up, context got lost, and the same items stayed in the queue too long.

The fix was to create a repeatable capture step: gather the source, summarize it, preserve the context, and store it somewhere stable so it can actually be used later.
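A minimal sketch of what such a capture step could look like, assuming a local JSON Lines file serves as the stable store. The `CapturedSource` fields, the `capture` function, and the file layout are illustrative names chosen for this example, not the tooling from the actual workflow, and the summary is passed in rather than generated here.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from pathlib import Path


@dataclass
class CapturedSource:
    """One saved source plus the context needed to use it later (hypothetical schema)."""
    url: str
    summary: str      # short summary written at capture time
    why_saved: str    # the context that usually gets lost in a bookmark
    captured_at: str  # ISO 8601 timestamp in UTC


def capture(url: str, summary: str, why_saved: str, store: Path) -> CapturedSource:
    """Append one record to a JSON Lines file so it lives outside the bookmark queue."""
    record = CapturedSource(
        url=url,
        summary=summary,
        why_saved=why_saved,
        captured_at=datetime.now(timezone.utc).isoformat(),
    )
    with store.open("a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(record)) + "\n")
    return record
```

Appending one record per line keeps the store easy to inspect by hand and easy for a later drafting step to read back.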

Once that step existed, the next step became clearer: turn that source material into a draft, then package it for publication and distribution.

That is the broader lesson. When a workflow repeats, break it into stages that can be inspected and improved separately.
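One way to picture "stages that can be inspected separately" is a runner that keeps every intermediate output instead of only the final one. This is a sketch under assumptions: `run_stages`, `draft`, and `package` are hypothetical placeholders, and in a real workflow each stage would call a model or a publishing tool rather than format a string.

```python
from typing import Callable

# A stage is a name plus a function from text to text (illustrative shape).
Stage = tuple[str, Callable[[str], str]]


def run_stages(source: str, stages: list[Stage]) -> dict[str, str]:
    """Run stages in order and keep each stage's output for later inspection."""
    outputs: dict[str, str] = {}
    current = source
    for name, step in stages:
        current = step(current)
        outputs[name] = current
    return outputs


def draft(notes: str) -> str:
    # Placeholder for the drafting stage.
    return f"DRAFT: {notes}"


def package(draft_text: str) -> str:
    # Placeholder for the packaging stage.
    return f"{draft_text}\n\n[ready for publication]"


result = run_stages("captured notes", [("draft", draft), ("package", package)])
print(result["draft"])  # prints "DRAFT: captured notes"
```

Because each stage's output is stored under its own name, a bad final result can be traced to the stage that produced it.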

## Why reusable skills matter

Reusable skills are useful because they capture operational judgment, not just tool access.

A good skill tells the system:

- what the job is

- what steps matter

- what to verify

- where the source of truth lives

- what success looks like

That matters because the goal is not to make the model sound impressive. The goal is to make the workflow more consistent.
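The five items above can be written down as a small structure and rendered into instruction text. The sketch below is only one possible shape: the `Skill` class, its field names, and the example values are assumptions made for illustration, not a format defined by any particular tool.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    """A reusable instruction set mirroring the five items listed above (hypothetical)."""
    job: str              # what the job is
    steps: list[str]      # what steps matter
    checks: list[str]     # what to verify
    source_of_truth: str  # where the source of truth lives
    success: str          # what success looks like

    def render(self) -> str:
        """Turn the skill into plain instruction text."""
        lines = [f"Job: {self.job}", "Steps:"]
        lines += [f"  {i}. {step}" for i, step in enumerate(self.steps, 1)]
        lines.append("Verify:")
        lines += [f"  - {check}" for check in self.checks]
        lines.append(f"Source of truth: {self.source_of_truth}")
        lines.append(f"Success: {self.success}")
        return "\n".join(lines)


# Example values are placeholders, not the real skill from the workflow.
capture_skill = Skill(
    job="Capture a saved link as a usable source note",
    steps=["Gather the source", "Summarize it", "Record why it was saved"],
    checks=["The summary matches the source", "The link still resolves"],
    source_of_truth="The stored source notes",
    success="A note someone can draft from without reopening the link",
)
print(capture_skill.render())
```

Keeping the skill as data rather than a pasted prompt makes it straightforward to change one field after a run and see exactly what changed.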

## What real runs teach you

The most valuable lessons usually appear only after the workflow touches real systems.

In our case, the live run surfaced practical issues that would not have shown up in a polished mock example:

- distribution steps behaved differently by platform

- media handling needed to happen at a more granular level than expected

- formatting assumptions that looked fine in a draft did not match the CMS renderer

- some parts of the flow needed clearer completion rules and better logging

These are not glamorous insights. They are the kind that actually make a system more useful.

## The compounding behavior to look for

A workflow starts to become valuable when each run improves the next one.

That usually means asking better questions after the run:

- what should become reusable?

- what should be logged?

- what failed because the instructions were unclear?

- what should be checked automatically next time?

- what still requires human review?

If those questions never get answered, you are not building capability. You are just repeating an experiment.
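To make that concrete, the post-run questions can be treated as a checklist that flags whatever was left unanswered. This is a sketch only: the `QUESTIONS` keys, the `unanswered` helper, and the sample answers are hypothetical and stand in for whatever review notes a real run would produce.

```python
# The five post-run questions, keyed by short names chosen for this example.
QUESTIONS = {
    "reusable": "What should become reusable?",
    "logged": "What should be logged?",
    "unclear": "What failed because the instructions were unclear?",
    "auto_check": "What should be checked automatically next time?",
    "human_review": "What still requires human review?",
}


def unanswered(review: dict[str, list[str]]) -> list[str]:
    """Return the questions that have no recorded answer for this run."""
    return [question for key, question in QUESTIONS.items() if not review.get(key)]


# Illustrative answers from one run; only two questions were addressed.
review = {
    "unclear": ["Completion rules for the distribution step"],
    "logged": ["Which platforms each post reached"],
}

for question in unanswered(review):
    print("Still open:", question)
```

Running a check like this after each run is a cheap way to notice when the review step is being skipped.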

## A practical way to apply this

If you want to build a repeatable AI workflow in your own business, start small.

Choose one task that already recurs often enough to matter.

Break it into stages.

Write down the source of truth, the output needed, the review step, and the failure points.

Then run it, inspect it, and update the workflow before trying to scale it.

That is usually a better path than trying to automate an entire department in one move.

## The bottom line

The useful shift is not from "manual work" to "fully autonomous work."

It is from one-off prompting to repeatable operating procedures that can improve over time.

That is where AI starts to feel less like a novelty and more like practical support for real work.

If you want a structured way to map those repeatable workflows inside your own business, the [AI Quick Start Guide](/ai-quick-start-guide) is a practical starting point.